The Electronic Frontier Foundation (EFF), a leading global non-profit defending digital privacy, free speech, and innovation, has formally implemented a comprehensive policy governing the use of Large Language Models (LLMs) in its open-source software projects. This strategic move addresses the growing trend of AI-assisted coding, emphasizing the primacy of human oversight and technical comprehension over the mere speed of production. By requiring contributors to maintain a deep understanding of their submitted code and mandating that all documentation and comments remain human-authored, the EFF seeks to safeguard the integrity of its software ecosystem against the inherent risks of automated generation.

Defining the New Standard for AI-Assisted Contributions

The core of the EFF’s new policy is a shift in focus from quantitative output to qualitative excellence. In an era where tools like GitHub Copilot, OpenAI’s ChatGPT, and Anthropic’s Claude can generate vast quantities of code in seconds, the EFF has identified a critical need to prioritize software durability and security. The organization’s directive explicitly states that while it does not outright ban LLM tools—acknowledging their pervasive role in modern development—it imposes strict guardrails on their application.

Under the new guidelines, any contributor utilizing AI assistance must be prepared to defend and explain every line of code submitted. This is designed to prevent "code dumping," where developers submit large volumes of machine-generated logic without fully grasping the underlying mechanics. Furthermore, the policy draws a firm line at the communicative aspects of software development: documentation, inline comments, and commit messages must be written by humans. This ensures that the intent and context of the software remain grounded in human reasoning, which is vital for long-term maintenance and community collaboration.

The Technical and Chronological Evolution of AI in Open Source

The integration of LLMs into software development has followed a rapid and often volatile timeline. The release of GitHub Copilot in 2021 marked the first major milestone, sparking intense debate within the open-source community regarding the ethics of training models on public repositories. By late 2022, the public release of ChatGPT accelerated the adoption of generative AI, leading to a surge in AI-generated pull requests across platforms like GitHub and GitLab.

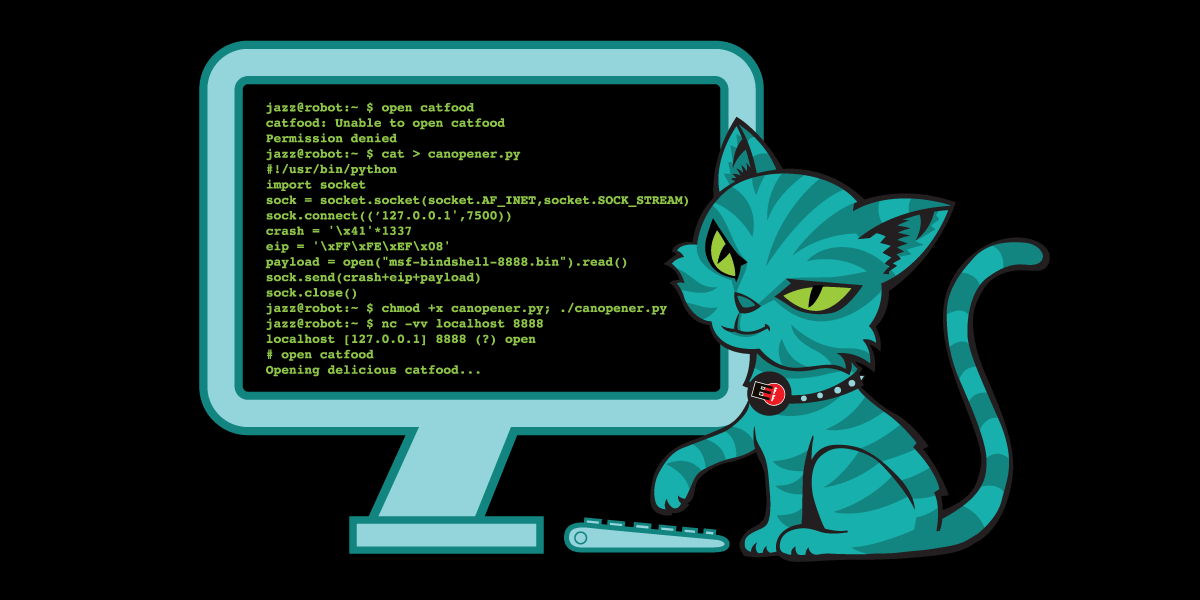

However, by 2023 and 2024, the "honeymoon phase" of AI coding began to face significant scrutiny. Maintainers of major open-source projects reported an influx of low-quality submissions that appeared correct on the surface but failed under edge cases or introduced subtle security vulnerabilities. The EFF’s policy serves as a formal response to this trajectory, positioning the organization alongside other major tech entities that are beginning to recognize the "technical debt" associated with unverified AI output.

The chronology of this policy also reflects the EFF’s broader history of software advocacy. For more than a decade, the EFF has maintained critical tools such as Certbot (which secures millions of websites via Let’s Encrypt) and Privacy Badger. The high stakes of these tools, which often involve cryptographic security and user privacy, mean that even a single "hallucinated" line of code could have serious consequences for the websites and users that depend on them.

Supporting Data: The Hidden Costs of LLM-Generated Code

The EFF’s decision is supported by a growing body of empirical evidence suggesting that AI-generated code is a double-edged sword. Research from various cybersecurity firms and academic institutions has highlighted several recurring issues:

- The Hallucination Factor: Studies have shown that LLMs can "hallucinate" software libraries or functions that do not exist. Attackers have begun exploiting this through a tactic often called "slopsquatting": they register malicious packages under names that AI models frequently hallucinate, so that unsuspecting developers import malware into their projects.

- Security Vulnerabilities: A 2023 study by Stanford University researchers found that developers who used AI assistants were more likely to produce insecure code compared to those who did not, yet they were more confident in the security of their results. This "false confidence" is a primary concern for the EFF.

- Reviewer Fatigue: Data from open-source maintainers indicates that reviewing AI-assisted code can take up to 50% longer than reviewing human-written code. Because the code often "looks" correct but may contain logical gaps or "bloat," maintainers are forced to perform deep refactoring rather than simple reviews, placing an unsustainable burden on small, resource-constrained teams.

- The "Stochastic Parrot" Effect: Since LLMs function by predicting the next most likely token based on training data, they often replicate outdated patterns or "copy-paste" bugs found in legacy repositories, scaling errors at a rate human developers rarely achieve.

Stakeholder Reactions and Ethical Considerations

While the EFF’s policy is internal to its projects, it reflects a growing consensus among ethical developers. Reactions from the broader open-source community have been largely supportive, with many maintainers expressing relief that a major advocacy group is legitimizing the "human-first" approach.

Critics of blanket bans on AI argue that such measures are impossible to enforce and may stifle innovation. The EFF addresses this by opting for a disclosure-based model. By requiring contributors to disclose when LLM tools are used, the EFF fosters a culture of transparency. This allows maintainers to apply a higher level of scrutiny to those specific contributions, effectively triaging their limited time and resources.
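To illustrate how disclosure can drive triage, the sketch below sorts incoming contributions into review queues based on whether the contributor disclosed LLM use. It is an assumption-laden illustration only: the `Contribution` type, the `llm_disclosed` field, and the queue names are hypothetical and do not come from the EFF's policy or tooling.

```python
from dataclasses import dataclass


@dataclass
class Contribution:
    title: str
    llm_disclosed: bool  # hypothetical flag: contributor stated an LLM tool was used


def review_tier(contribution: Contribution) -> str:
    """Pick a hypothetical review queue; disclosed LLM use gets closer scrutiny."""
    return "extended-review" if contribution.llm_disclosed else "standard-review"


def triage(contributions: list[Contribution]) -> dict[str, list[str]]:
    """Group contribution titles by review queue, preserving submission order."""
    queues: dict[str, list[str]] = {"extended-review": [], "standard-review": []}
    for contribution in contributions:
        queues[review_tier(contribution)].append(contribution.title)
    return queues


if __name__ == "__main__":
    incoming = [
        Contribution("Fix typo in README", llm_disclosed=False),
        Contribution("Rewrite certificate renewal logic", llm_disclosed=True),
    ]
    print(triage(incoming))
```

The point of the design is that disclosure changes how much reviewer time a submission receives, not whether it is accepted.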

Beyond the technical hurdles, the EFF points to a "climate of companies speedrunning their profits over people." This critique targets the aggressive commercialization of AI by "Big Tech" firms that often prioritize market share over the ethical implications of their tools. The EFF’s stance is that AI tools are not neutral; they are products of an ecosystem that often ignores privacy, data sovereignty, and the environmental cost of the massive energy consumption required to train and run these models.

Broader Impact and Industry Implications

The implementation of this policy by the EFF is likely to serve as a blueprint for other non-profit and security-focused software organizations. As the industry moves toward "AI-augmented" workflows, the notion of what constitutes a "contribution" is being redefined. The EFF’s insistence on human-authored documentation is particularly significant, as it protects the "tribal knowledge" of the open-source community, knowledge that is often lost when code is generated by a black-box algorithm.

Furthermore, the policy aligns with the EFF’s long-standing position on copyright and user rights. The organization has consistently argued that expanding copyright law to cover AI-generated content is an impractical and potentially harmful solution. Instead, the EFF advocates for procedural and ethical standards, such as the one introduced here, to manage the challenges of AI. This approach focuses on the responsibility of the developer rather than the ownership of the machine’s output.

The environmental and ethical dimensions also play a role in the broader implications of this move. By discouraging the thoughtless use of LLMs, the EFF is indirectly addressing the climate concerns associated with the AI industry. The compute required to serve AI queries at scale adds up to a significant carbon footprint, a fact the EFF highlights as a continuation of harmful tech industry practices.

Conclusion: Innovation with Accountability

The Electronic Frontier Foundation’s new policy on LLM-assisted contributions is a calculated attempt to balance the benefits of innovation with the necessity of accountability. In the fast-evolving landscape of software engineering, the temptation to use AI as a shortcut is high, but the EFF’s mandate serves as a reminder that in the world of open source, quality and trust are the ultimate currencies.

By requiring contributors to be the masters of their own code and ensuring that the human element remains central to documentation and communication, the EFF is reinforcing the foundational principles of the open-source movement. This policy does not merely govern code; it preserves the integrity of the collaborative process, ensuring that the software protecting our digital rights remains transparent, secure, and, most importantly, understood by the people who build and maintain it. As other organizations observe the results of this policy, it may well become the standard for responsible software development in the age of artificial intelligence.