Following widespread complaints that its flagship platform, Facebook, had devolved into an "AI slop hellscape," Meta on Friday unveiled new tools designed to detect and combat impersonation, alongside updated creator guidelines that more precisely define what constitutes "original content." The move signals an intensified effort by Meta to restore content quality and foster a more authentic environment for its users and creators. It responds to growing criticism from users and industry observers over the proliferation of low-quality, repetitive, and algorithmically generated content.

The Genesis of the "AI Slop Hellscape"

The term "AI slop" emerged from growing user frustration with the deluge of low-quality, often AI-generated or recycled content flooding social media feeds. For months, users across various platforms, including Facebook, Reddit, and X (formerly Twitter), have reported an alarming increase in posts characterized by their generic nature, poor grammar, uncanny valley imagery, and lack of genuine human insight. This phenomenon is largely attributed to the accessibility of advanced generative AI tools, which allow for the rapid production of text, images, and even video with minimal human effort. While AI offers immense creative potential, its misuse has degraded the user experience, as authentic content struggles to compete with the sheer volume of algorithmically optimized but ultimately hollow productions.

On Facebook, this issue became particularly pronounced, leading many to label their feeds as an "AI slop hellscape." The core problem lay in the algorithm’s struggle to differentiate between genuinely creative, human-produced content and mass-generated, often spammy, material. This not only diluted the overall quality of the platform but also undermined the efforts of legitimate creators whose original work was getting lost in the noise. The negative impact extended beyond user experience, touching upon issues of trust, information veracity, and the economic viability of content creation on the platform. If users cannot trust the authenticity or quality of content, their engagement wanes, directly impacting creators’ ability to monetize their work and Meta’s advertising revenue.

A Chronology of Meta’s Counter-Offensive

Meta’s current announcements are not an isolated response but rather the latest phase in a concerted, multi-pronged effort initiated well over a year ago. Recognizing the escalating problem, the company first announced a comprehensive crackdown on spammy and unoriginal content in late 2024. This initial strategy targeted practices such as the repeated reuse of others’ photos, videos, or text without significant transformation or added value. The stated goal was clear: to elevate original creator content within its feeds and actively push back against the burgeoning tide of AI-generated "slop" and other low-quality posts that were demonstrably eroding Facebook’s reputation and user satisfaction.

Throughout 2025, Meta’s content moderation teams and algorithmic developers worked to implement these changes. This involved refining machine learning models to identify patterns indicative of unoriginal or low-value content, increasing human review capacity, and adjusting feed ranking signals to favor genuine creativity. The company’s internal reports from this period indicated a steady, albeit challenging, battle against an ever-evolving adversary, as malicious actors continuously adapted their tactics to bypass detection systems.

These earlier efforts began to show measurable results in the second half of 2025. According to Meta, views of and time spent watching original content on Facebook approximately doubled during that period compared with the same period a year earlier, an improvement the company attributes to its interventions. The increase suggests that Meta's initial steps to prioritize authentic content are working, and that users spend more time with higher-quality, original material when it is made more discoverable.

Beyond "slop," impersonation has been another critical challenge. Fake accounts mimicking legitimate creators, public figures, or brands defraud users and damage the reputation and monetization potential of the people and businesses they copy. Meta reports that its efforts here have also produced results: it removed 20 million impersonating accounts in 2025, and impersonation reports specifically targeting large creators fell by 33%. The problem persists, but the figures suggest that the platform's detection and removal mechanisms are becoming more effective, particularly for high-profile targets.

Enhanced Tools for Creator Protection: A New Frontier

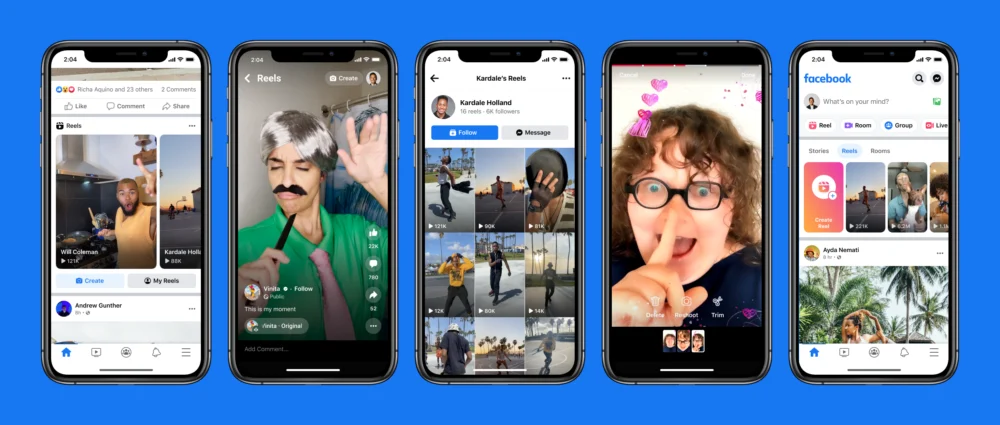

The latest announcement introduces further enhancements to Facebook’s content protection tools, specifically designed to empower creators in safeguarding their intellectual property. These tools allow creators to take proactive action when their content, particularly short-form videos known as Reels, is detected being republished across Facebook’s platforms by impersonators or unauthorized users.

Previously, creators had access to a central dashboard where they could flag infringing content. With the upcoming update, Meta aims to streamline and simplify this reporting process even further. The new system will allow creators to submit multiple reports from a single, centralized location, significantly reducing the administrative burden and accelerating the content removal process. This is a critical development, as the speed of detection and removal is paramount in mitigating the spread of unauthorized content and protecting a creator’s brand and revenue streams.

However, Meta acknowledges a crucial limitation: the current tool focuses primarily on matching duplicate content. It excels at identifying exact copies or near-identical re-uploads of a creator's work. It is not yet fully equipped to detect the unauthorized use of a creator's likeness, a growing concern in the age of sophisticated deepfakes and AI-generated avatars. The ability to create convincing digital replicas of individuals poses a challenge that goes beyond simple content duplication. It requires more advanced AI detection capabilities and potentially new legal frameworks. Closing this gap remains a significant area for future development and is critical for truly comprehensive creator protection.
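Meta has not disclosed how its matching works. To illustrate why duplicate matching catches re-uploads but not likeness misuse, the sketch below uses one common, generic technique: average hashing of downscaled grayscale frames, compared by Hamming distance. The 4x4 frames, the `max_distance` threshold of 2, and every function name are hypothetical assumptions for illustration only. This is not Meta's system.

```python
from typing import List

# Hypothetical representation: a tiny downscaled grayscale frame, values 0-255.
Frame = List[List[int]]


def average_hash(frame: Frame) -> int:
    """Build a bit string with one bit per pixel: 1 if brighter than the frame's mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: Frame, b: Frame, max_distance: int = 2) -> bool:
    """Treat frames as duplicates if their hashes differ in at most max_distance bits."""
    return hamming(average_hash(a), average_hash(b)) <= max_distance


original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 225],
    [18, 215, 20, 235],
]
# A "minor edit": every pixel brightened by 20 (clamped to 255).
brightened = [[min(p + 20, 255) for p in row] for row in original]
# Unrelated content with the opposite light/dark pattern.
different = [
    [200, 10, 220, 30],
    [210, 15, 230, 25],
    [205, 12, 225, 35],
    [215, 18, 235, 20],
]

print(is_near_duplicate(original, brightened))  # True
print(is_near_duplicate(original, different))   # False
```

A uniform brightness change shifts the mean along with every pixel, so the hash is unchanged and the edited copy still matches. New footage of the same person would generally produce unrelated hashes. That is one reason detecting likeness misuse needs a different kind of model.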

Redefining "Original Content": New Guidelines and Their Impact

Central to Meta’s strategy is a clearer, more robust definition of what constitutes "original" content. As part of these recent changes, Facebook has updated its content guidelines to provide explicit criteria. The new guidelines categorize content as original if it is "filmed or produced directly by a creator." This straightforward definition aims to prioritize genuine, first-party creations.

Moreover, the guidelines now explicitly embrace "reels that remix other content or use overlays to present something new — like analysis, discussion, or new information." This is a significant clarification that acknowledges the evolving nature of digital creativity, where remix culture and transformative works are increasingly prevalent. It allows for derivative content that adds substantial value, context, or commentary to be recognized as original, fostering a dynamic and interactive content ecosystem rather than stifling it.

Conversely, content that involves only "minor edits to a creator’s work or is duplicative of that" will be deemed unoriginal and subsequently deprioritized by Facebook’s algorithms. This directly targets low-value content practices such as simple re-uploads, or superficial alterations like adding basic borders, generic captions, or slight cropping. The message is unequivocal: mere cosmetic changes will no longer be sufficient to differentiate unoriginal content from its source, and such content will see significantly reduced reach and visibility. This updated policy aims to weed out the vast quantities of recycled content that contribute to the "slop" phenomenon, encouraging creators to invest in genuinely new and engaging material.

The implications for creators are substantial. Those who consistently produce original, high-quality content or genuinely transformative remixes are likely to see increased visibility and engagement. Conversely, creators relying on re-uploading or minimally altering existing content will find their reach severely curtailed, potentially impacting their ability to build an audience or monetize their presence on the platform. This move is expected to drive a shift towards greater authenticity and creativity, rewarding genuine effort and discouraging content arbitrage.

Broader Industry Challenges: A Shared Battle Against Digital Deception

Meta is far from alone in grappling with the profound impact of AI technology on its digital community. The challenges of distinguishing authentic human creativity from AI-generated simulations, and protecting individuals from digital impersonation, are pervasive across the social media landscape.

Just this week, YouTube, another dominant player in digital content, announced its own expansion of AI deepfake detection tools. Significantly, YouTube’s enhanced focus is on protecting "politicians, public figures, and journalists." This move highlights the particularly sensitive nature of deepfakes when applied to individuals whose public image and credibility are paramount, with potential implications for democratic processes, public trust, and individual safety. The fact that two of the world’s largest content platforms are simultaneously rolling out advanced AI detection and content authenticity measures underscores the urgency and scale of this global challenge.

The rise of AI has democratized content creation but also content manipulation. From synthetic media that blurs the lines between reality and fiction to sophisticated phishing attempts using AI-generated voices, the digital environment is becoming increasingly complex to navigate. Platforms like Facebook and YouTube are now on the front lines of a battle for digital authenticity, where the integrity of information and the trust of their users are at stake.

Implications for Creators, Users, and the Platform

For Creators: The updated guidelines and enhanced tools offer a mixed but generally positive outlook. For original content creators, the prioritization of their work means greater visibility, increased engagement, and potentially higher monetization opportunities. The improved impersonation tools provide a much-needed layer of protection for their intellectual property and brand. However, the changes also raise expectations for originality and quality. Creators who have relied on curating or slightly modifying existing content will need to pivot their strategies towards producing genuinely new material. The platform is signaling a clear shift towards rewarding genuine creative effort over mere aggregation.

For Users: The ultimate goal of these changes is to improve the user experience. By reducing the volume of "AI slop" and unoriginal content, users should find their feeds more engaging, relevant, and trustworthy. The ability to discover authentic voices and high-quality content more easily could lead to increased time spent on the platform and a renewed sense of community. The reduction in impersonation also enhances user safety and confidence in the identities they interact with online.

For Meta and Facebook: These initiatives are critical for Facebook’s long-term viability as a premier creator platform and a trusted source of content. If unoriginal content and AI-generated "slop" were allowed to continue unchecked, they would inevitably drown out original voices, reduce creators’ ability to monetize, and alienate users. This would lead to declining engagement, a loss of competitive edge against platforms that successfully manage content quality, and ultimately, a detrimental impact on Meta’s advertising revenue, which is the lifeblood of its business model. By proactively addressing these issues, Meta aims to safeguard its reputation, ensure the platform remains a preferred destination for creators, and secure its position in the evolving digital landscape.

The reported doubling of views and time spent on original content in the second half of 2025, compared with a year earlier, indicates that these efforts are already yielding positive results and supports Meta's strategic direction. It also suggests that investing in content quality and creator protection is not just a regulatory or ethical imperative but a sound business decision.

Looking Ahead: The Evolving Landscape of Digital Content

The battle against "AI slop" and digital impersonation is an ongoing one, characterized by an arms race between platforms’ detection capabilities and the ingenuity of those seeking to exploit vulnerabilities. As AI technology continues to advance, so too will the sophistication of both content generation and manipulation. Meta’s latest updates represent a significant step forward, but they are unlikely to be the final word.

Future challenges will include developing even more nuanced AI detection models that can differentiate between genuinely transformative use and superficial alterations, especially as AI-assisted creativity becomes more mainstream. Addressing the "likeness" issue, where AI can convincingly mimic individuals, will also require innovative technological solutions and potentially new ethical and legal frameworks. The collaboration between platforms, researchers, and policymakers will be crucial in navigating these complex issues and shaping a safer, more authentic digital future. For now, Meta’s renewed commitment to original content and creator protection offers a promising outlook for the quality of content on one of the world’s largest social networks.