After widespread complaints that Facebook had devolved into an "AI slop hellscape," Meta on Friday announced new tools to detect impersonation and updated its creator guidelines to define more clearly what counts as "original content." The changes extend the company’s effort to curb the low-quality, AI-generated, and unoriginal material that has degraded the user experience and threatened the viability of its creator economy. They respond to growing dissatisfaction among users and creators, who have seen their feeds fill with repetitive, derivative, or fraudulently presented content.

The Genesis of "AI Slop": A Growing Digital Dilemma

The digital landscape has been irrevocably transformed by the rapid advancements in generative artificial intelligence. Tools capable of producing text, images, and video with remarkable speed and apparent authenticity have unleashed a deluge of content onto platforms like Facebook. While many celebrate the creative potential of AI, its misuse has led to a degradation of content quality, often referred to pejoratively as "AI slop." This phenomenon encompasses everything from hastily generated articles and memes to algorithmically rehashed videos and images, all designed to capture fleeting attention without offering genuine value. For Facebook, a platform that thrives on user engagement and original expression, the unchecked spread of such content poses an existential threat. It erodes user trust, dilutes the impact of genuine creators, and ultimately diminishes the platform’s appeal as a destination for meaningful interaction and discovery. The stakes are particularly high for Meta, which has heavily invested in fostering a vibrant creator ecosystem, recognizing that original content is the lifeblood of sustained engagement and advertising revenue.

The proliferation of "AI slop" can be traced to several factors. Powerful AI tools that require minimal technical expertise have significantly lowered the barrier to content creation. Combined with algorithms that reward quantity and engagement, this has inadvertently encouraged the mass production of easily digestible, often superficial content. Users accustomed to personalized feeds began reporting a noticeable decline in the quality and authenticity of what they were shown, and disillusionment spread. For Meta, which has long grappled with content moderation challenges ranging from misinformation to hate speech, "AI slop" adds a new layer of complexity that threatens the user experience.

Meta’s Proactive Stance: A Chronology of Efforts to Reclaim Quality

Meta’s recent announcements build on a series of initiatives launched over the past year. In 2025 the company began a crackdown on spammy and unoriginal content, targeting various forms of duplication, including the repeated reuse of others’ photos, videos, or text without substantial alteration or added value. The stated objective was to elevate original creator content in its feeds and push back against AI-generated "slop" and other low-quality posts that were tarnishing Facebook’s reputation. The crackdown was a reorientation toward content quality rather than a cosmetic adjustment: a platform saturated with derivative material risks losing its competitive edge and its most valuable assets, its creators and engaged users.

The importance of this strategic shift cannot be overstated for Facebook’s continued success as a leading creator platform. In an environment where unoriginal content and AI slop proliferate unchecked, original voices risk being drowned out, and creators’ ability to monetize their efforts is severely curtailed. If genuine creative output struggles to find an audience or generate sufficient revenue, creators will inevitably seek more hospitable environments, leading to a talent drain that would severely impact Facebook’s long-term vitality. The platform’s commitment to prioritizing original content is thus a critical investment in its future, signaling to creators that their contributions are valued and protected.

Meta has already reported encouraging results from those earlier efforts. The company said that during the second half of 2025, views of original content on Facebook and time spent watching it approximately doubled compared with the same period in 2024. By Meta’s account, the increase suggests that the initial crackdown had a tangible effect and that users respond favorably to a higher standard of content. It also gives the company a quantifiable measure of success and strengthens the business case for investing in content quality.

Beyond the issue of "slop," Meta also reported progress against impersonation, a pervasive problem that undermines both creators and user trust. The company said it removed 20 million accounts last year that were impersonating legitimate users or public figures. It also reported a 33% drop in impersonation reports targeting large creators, which suggests that detection and enforcement are improving for the high-profile accounts that malicious actors often target. The figures indicate both the scale of the problem and the resources Meta is devoting to it.

Enhanced Tools and Sharpened Definitions for a Clearer Digital Space

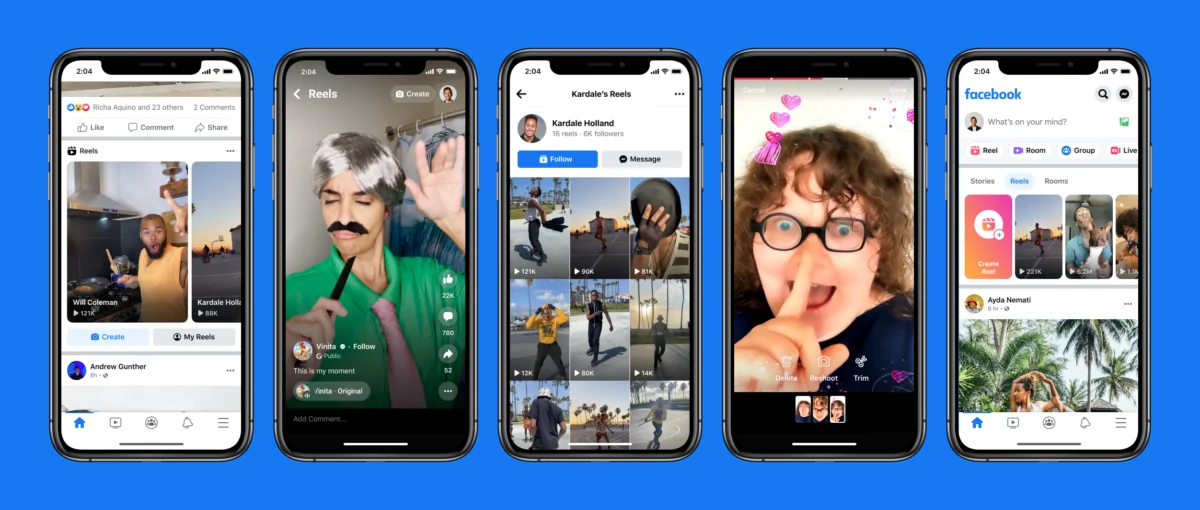

In its latest move, Facebook is testing enhancements to its content protection tools. The tools let creators act quickly when their original Reels, Facebook’s short-form video format, are detected across Facebook after being published by impersonators. Creators can already flag infringing content from a single dashboard. With the upcoming update, Meta aims to simplify the process further so that creators can submit reports all in one place. The change is expected to reduce the administrative burden on creators, leaving them more time for their craft and less spent policing unauthorized use of their work.

However, the existing content protection framework, while effective for direct duplication, primarily focuses on matching identical content. A critical area that still requires addressing is the unauthorized use of a creator’s likeness, often facilitated by increasingly sophisticated deepfake technology. This distinction is crucial, as a deepfake might not be an exact duplicate of a creator’s original video but rather a synthetically generated piece of content that falsely depicts them. The challenge of detecting such nuanced forms of impersonation presents a more complex technical hurdle, one that Meta and the broader industry are actively grappling with. The current tools are a strong foundation, but the evolving nature of AI-driven deception necessitates continuous innovation in detection capabilities. Industry experts suggest that the development of robust deepfake detection requires advanced machine learning models trained on vast datasets of both authentic and synthetic media, a computationally intensive and constantly evolving field.

Industry-Wide Challenge: The Broader Landscape of AI Content Moderation

The struggle against AI-generated misinformation and impersonation is not unique to Meta; it is an industry-wide battle. This week YouTube also announced plans to expand its AI deepfake detection tools. YouTube’s initiative targets content depicting politicians, public figures, and journalists, reflecting the societal risks of deceptive AI-generated media involving influential individuals. Parallel action by a key competitor underscores how severe and pervasive the problem is. The concurrent moves across major platforms signal growing recognition that the challenge requires robust, multi-faceted solutions, often involving partnerships with research institutions and AI ethics organizations.

Defining Originality: New Guidelines for a Fairer Ecosystem

A cornerstone of Meta’s updated strategy involves refining Facebook’s content guidelines to provide an unambiguous definition of "original content." This clarity is paramount for both creators and the algorithms tasked with promoting or demoting content. The updated guidelines now explicitly state that "original" content includes material that is "filmed or produced directly by a creator." This emphasizes authentic, first-hand creation. Furthermore, it acknowledges the legitimacy of "reels that remix other content or use overlays to present something new," provided they incorporate elements like "analysis, discussion, or new information." This distinction is vital for fostering a culture of transformative creativity rather than mere replication. It supports creators who build upon existing works to offer fresh perspectives, critical commentary, or educational insights, thereby enriching the platform’s content ecosystem. This careful nuance aims to differentiate genuine creative remixing from mere content scraping.

Conversely, the updated guidelines also precisely delineate what will be deemed "unoriginal" and, consequently, deprioritized by Facebook’s algorithms. This category now includes content that involves "minor edits to a creator’s work or is duplicative of that." The policy explicitly targets "re-uploads or other low-value changes, like adding borders or captions," asserting that such superficial modifications will no longer be sufficient to differentiate unoriginal content from its source. This stringent definition aims to eliminate the "slop" that floods feeds with near-identical versions of popular content, forcing creators to genuinely innovate if they wish to gain visibility. The implications for content strategists and aspiring viral video producers are clear: true originality and substantive value addition are now paramount. This shift signals a move away from an engagement-at-all-costs mentality towards one that prioritizes authentic, high-quality content.

Broader Implications and the Road Ahead

The broader implications of Meta’s intensified crackdown are far-reaching. For the creator economy, these changes represent a crucial validation of their work and a commitment to creating a fairer playing field. By prioritizing original content and combating impersonation, Meta aims to ensure that creators receive due credit, audience reach, and the monetization opportunities they deserve. This, in turn, can incentivize higher quality content production, fostering a more vibrant and diverse ecosystem. However, the implementation and enforcement of these guidelines present significant technical and logistical challenges. The sheer volume of content uploaded to Facebook daily necessitates sophisticated AI detection systems, which themselves must constantly evolve to keep pace with the ingenuity of those seeking to circumvent the rules. The "arms race" between platform security and malicious actors is an ongoing reality, demanding continuous investment in research and development.

From a user perspective, the initiatives promise a more engaging and trustworthy experience. A cleaner feed, less cluttered with repetitive or deceptive content, could lead to increased satisfaction and time spent on the platform. For advertisers, a higher quality content environment means their messages are more likely to appear alongside reputable and engaging material, enhancing brand safety and campaign effectiveness. However, the challenge of maintaining accuracy in AI detection, particularly concerning satire, parody, or nuanced forms of commentary, will require careful calibration to avoid inadvertently stifling legitimate creative expression. Digital rights advocates will likely monitor these developments closely, ensuring that content moderation policies do not become overly broad or punitive, potentially leading to the suppression of valid critical content. The balance between strict enforcement and safeguarding free expression remains a delicate act.

Meta’s recent announcements mark a significant step in its effort to restore content quality. By investing in impersonation detection, refining its content protection tools, and articulating a clearer definition of "original content," the company is taking a stand against the "AI slop hellscape" that has threatened its platform. Together with the earlier crackdowns, these efforts signal a commitment to a high-quality, creator-centric environment. Challenges remain, particularly as generative AI and deepfake technology continue to evolve, but the measures are a step toward safeguarding the platform’s integrity, empowering its creators, and improving the experience for billions of users. Their success will shape Facebook’s future and may set a precedent for how major digital platforms handle the ethical and practical implications of generative AI.