Roblox, the immensely popular online gaming and creation platform, announced on Monday new account types designed to give children and younger teens age-appropriate access to its vast ecosystem of chat and games. This significant structural overhaul, set to roll out globally at the beginning of June, responds to growing regulatory pressure, a series of high-profile lawsuits, and increasing public scrutiny of child safety online. The initiative builds on the mandatory age verification system implemented across the platform in January, which will now serve as the foundational technology for assigning users to their respective account tiers. Users who opt not to complete age verification will automatically be restricted to a curated selection of games deemed suitable for younger audiences.

Background: A Platform Under Scrutiny

Roblox’s decision to segment its users by age is not an isolated development but the culmination of years of escalating concerns and legal challenges over the safety of its predominantly young user base. With an average of over 70 million daily active users (DAU) as of late 2025, a significant portion of whom are under 16, Roblox has long grappled with the complexities of moderating user-generated content at scale. Its open-ended nature, which lets users create and share their own games and experiences, fosters immense creativity but also presents significant moderation challenges.

The platform’s growth trajectory has been meteoric since its inception in 2004, transforming it from a niche online game into a cultural phenomenon and a publicly traded company with a market capitalization in the tens of billions of dollars. This rapid expansion, however, brought increased attention to the risks faced by its youngest users. Critics and child safety advocates have consistently highlighted vulnerabilities, including exposure to inappropriate content, cyberbullying, and, in more severe cases, online grooming.

A critical turning point arrived in 2024 and 2025 with a wave of adverse publicity and legal action. Investigative reports, notably one from Bloomberg, detailed allegations of a "pedophile problem" on the platform, including instances where young users were allegedly exposed to sexually explicit content and grooming attempts. The pressure was compounded by a damning report from Hindenburg Research, an investment research firm known for its short-seller reports, which accused Roblox of failing to adequately protect its young users and of prioritizing growth over safety.

These reports catalyzed legal action from state attorneys general. In August 2025, the Attorney General of Louisiana filed a lawsuit against Roblox, alleging violations of consumer protection laws and a failure to safeguard children from predatory behavior. This was followed by a similar lawsuit from the Texas Attorney General in November 2025, explicitly accusing Roblox of "prioritizing pixel pedophiles over child safety." These legal challenges underscored the urgent need for more robust protective measures and a more transparent system for managing age-appropriate access.

Chronology of Key Safety Initiatives

The new account types represent a significant escalation in Roblox’s efforts to address these criticisms, building upon a series of recent policy and technological advancements:

- January 2026: Roblox implemented mandatory age checks for all users globally who wished to access chat features. This was a foundational step, establishing the technology and user compliance necessary for a tiered account system. Prior to this, age verification was either optional or required only for certain features.

- April 2026 (Last Week): Roblox launched its new subscription service, "Roblox Plus," priced at $4.99 per month. This service, which offers exclusive benefits and discounts, is now also a prerequisite for developers under 16 years old to participate in the enhanced screening process for games targeting younger audiences. The existing "Roblox Premium" subscription will cease accepting new sign-ups by April 30.

- Beginning of June 2026: The new age-gated account types – Roblox Kids, Roblox Select, and Standard Roblox – are scheduled for global rollout. Roblox has committed to providing a "transition period" to allow existing users to complete the necessary age verification process.

This timeline demonstrates a clear strategic shift by Roblox towards a more stringent, age-segregated platform experience, driven by both internal commitment to safety and external regulatory and legal pressures.

The New Age-Gated Account Tiers Explained

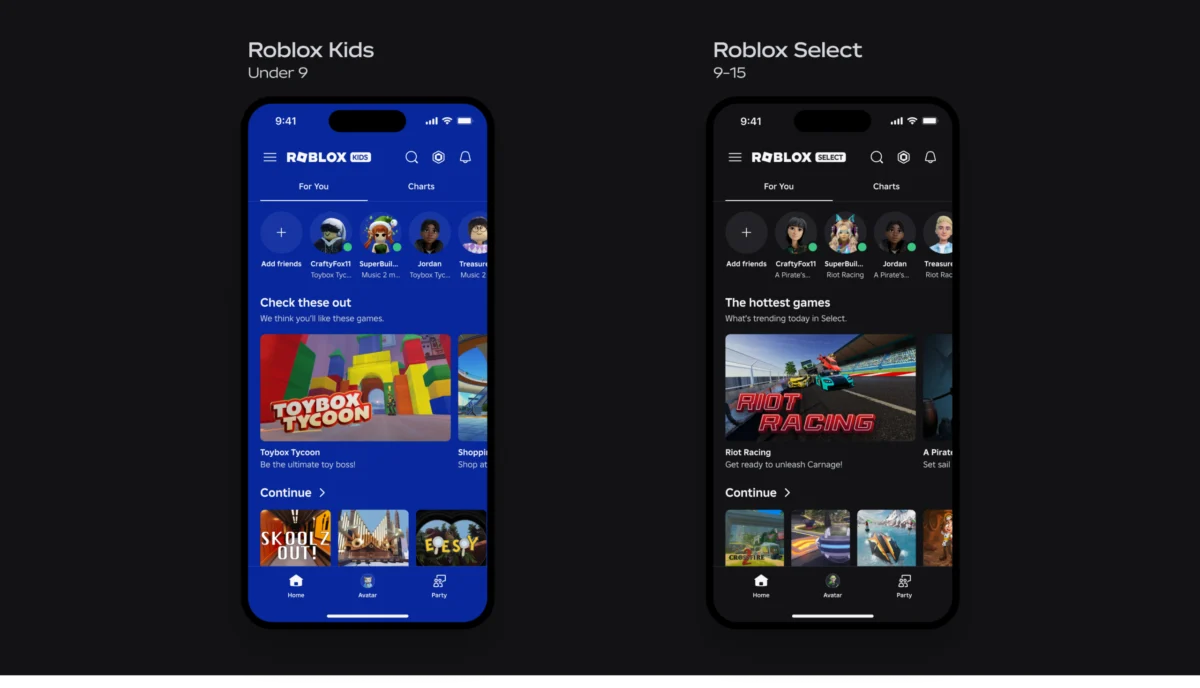

The core of Roblox’s new strategy lies in its three distinct account types, each tailored to specific age demographics and offering varying levels of access to content and communication features:

- Roblox Kids Accounts (Ages 5-9):

  - Content Access: Users in this category will be restricted to games with "Minimal" or "Mild" content ratings. These ratings denote experiences that may feature occasional mild violence, mild crude humor, and infrequent mild fear, but are generally considered safe and appropriate for young children.

  - Chat Features: Crucially, chat will be off by default for all Roblox Kids accounts. This significantly limits unsolicited communication, addressing a major concern for parents. The only way for a child in this tier to communicate with another user is if their parent or guardian explicitly selects and approves specific individuals their child is permitted to chat with. This "whitelist" approach provides parents with granular control over their child’s social interactions on the platform.

- Roblox Select Accounts (Ages 9-15):

  - Content Access: Users in the Roblox Select tier will have access to a broader range of content, including games rated up to "Moderate." This category may include more frequent or intense instances of mild violence, some fantasy violence, slightly more pronounced crude humor, and potentially mild suggestive themes, but still within a generally acceptable range for pre-teens and early adolescents.

  - Chat Features: These users will be able to chat with other users within a similar age group. While specific details on the scope of this "similar age group" filter are pending, it is expected to prevent older users from easily communicating with younger teens, adding another layer of protection.

- Standard Roblox Accounts (Ages 16 and Up):

  - Content Access: Users aged 16 and above will operate under a standard Roblox account, granting them access to the vast majority of experiences on the platform. However, a significant distinction is made for "Restricted Content."

  - Restricted Content (Ages 18+): Only users who are 18 years or older will be able to access "Restricted Content." This category is defined as experiences that may include strong violence, mature romantic themes, strong language, and other content generally deemed unsuitable for minors. This final tier ensures that truly adult-oriented experiences, which may exist on the platform, are strictly segregated from underage users.

A notable feature across all tiers is the parental override option. Parents retain the ability to approve specific games for their children, even if those games fall outside the default restrictions of their child’s account type. For example, if a younger child with a Roblox Kids account wishes to play a game with an older sibling that is rated for a Roblox Select account, a parent can grant explicit permission, demonstrating a balance between automated protection and parental discretion.
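The tier rules and the parental override can be summarized in a short Python sketch. This is an illustration only, not Roblox's implementation: the names `Account`, `Rating`, `tier_for`, and `can_play` are hypothetical. Several behaviors are assumptions the announcement does not spell out: age 9 is listed in both the Kids and Select ranges and is placed in Select here, unverified users are treated like Kids accounts, and a parental approval is assumed not to unlock 18+ Restricted Content.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional, Set


class Rating(IntEnum):
    """Content maturity labels named in the article, ordered by strictness."""
    MINIMAL = 0
    MILD = 1
    MODERATE = 2
    RESTRICTED = 3


@dataclass
class Account:
    # None means the user never completed age verification.
    verified_age: Optional[int]
    # Game IDs a parent has explicitly approved (the parental override).
    parent_approved_games: Set[str] = field(default_factory=set)


def tier_for(account: Account) -> str:
    """Map a verified age to an account tier (boundaries partly assumed)."""
    age = account.verified_age
    if age is None or age < 9:   # assumption: unverified users get Kids limits
        return "Roblox Kids"
    if age < 16:                 # assumption: age 9 falls in Select
        return "Roblox Select"
    return "Standard Roblox"


def max_rating(account: Account) -> Rating:
    """Highest rating available by default, before any parental override."""
    age = account.verified_age
    if age is None or age < 9:
        return Rating.MILD
    if age < 18:                 # Select, and Standard accounts aged 16-17
        return Rating.MODERATE
    return Rating.RESTRICTED


def can_play(account: Account, game_id: str, rating: Rating) -> bool:
    # Assumption: Restricted Content stays 18+ regardless of parental approval.
    if rating == Rating.RESTRICTED:
        return account.verified_age is not None and account.verified_age >= 18
    return rating <= max_rating(account) or game_id in account.parent_approved_games
```

In this sketch, a seven-year-old is blocked from a "Moderate" game until a parent adds that game's ID to `parent_approved_games`, which mirrors the sibling example above.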

Mandatory Age Verification: The Foundation of the System

The efficacy of this tiered account system hinges entirely on Roblox’s mandatory age verification technology. Introduced in January 2026, this system requires users to provide a government-issued ID (like a driver’s license or passport) and a live selfie, which is then analyzed using facial recognition technology to confirm age. This process, which Roblox asserts is robust and privacy-preserving, is designed to be the gateway for assigning users to the correct age group and unlocking appropriate content and features.

The previous optional nature of age verification meant many younger users could potentially bypass content restrictions. By making it mandatory for accessing chat and for gaining full, age-appropriate access to the platform’s offerings, Roblox is creating a much more rigid and enforceable framework. Users who do not complete the age verification will, by default, be placed into the most restrictive category, ensuring a baseline level of safety.
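The chat rules that follow from verification can be sketched the same way. Everything here is a hypothetical illustration: `ChatUser` and `can_chat` are invented names, and because Roblox has not published the scope of its "similar age group" filter, the sketch simply assumes that users may chat only within their own age band (9-15 or 16 and up). It also assumes, as in the January rollout, that unverified users cannot chat at all.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass
class ChatUser:
    user_id: str
    verified_age: Optional[int]  # None: age check not completed
    # Contacts a parent has approved; only consulted for Kids accounts.
    parent_approved_contacts: FrozenSet[str] = frozenset()


def _band(age: int) -> str:
    """Age band used for chat matching (boundaries are assumptions)."""
    if age < 9:
        return "kids"
    if age < 16:
        return "select"
    return "standard"


def can_chat(a: ChatUser, b: ChatUser) -> bool:
    # Chat requires a completed age check on both sides.
    if a.verified_age is None or b.verified_age is None:
        return False
    band_a, band_b = _band(a.verified_age), _band(b.verified_age)
    # Kids accounts: chat is off unless a parent approved this exact contact.
    if band_a == "kids" and b.user_id not in a.parent_approved_contacts:
        return False
    if band_b == "kids" and a.user_id not in b.parent_approved_contacts:
        return False
    if "kids" in (band_a, band_b):
        return True  # every Kids account involved has whitelisted the other user
    # Assumption: "similar age group" means the same band.
    return band_a == band_b
```

The whitelist check runs before the age-band comparison so that a parent-approved older sibling can still reach a child on a Kids account.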

Enhanced Content Moderation and Game Screening Criteria

Beyond the age-gated accounts, Roblox is implementing a comprehensive three-step screening process for games to be made available to younger users on Roblox Kids and Roblox Select accounts. This multi-layered approach aims to proactively filter out inappropriate content before it reaches vulnerable audiences.

- Developer Verification: The first line of defense involves the developers themselves. To be eligible for their games to be considered for younger audiences, developers must meet stringent requirements:

  - If under 16, they must complete ID verification or maintain a connection to a linked parent account.

  - Their Roblox account must have two-step verification enabled, adding a security layer against unauthorized access.

  - Developers must have an active Roblox Plus subscription. This new requirement, tied to the $4.99 monthly service, suggests that Roblox is not only enhancing safety but also potentially leveraging it to drive subscription uptake and incentivize responsible development.

- Real-Time Evaluation and AI Moderation: After developer verification, eligible games undergo a rigorous evaluation process. Roblox will deploy users aged 16 and older to play and assess these games in real-time. These testers will submit feedback and reports, which will be crucial in evaluating the overall experience, content appropriateness, and potential risks before the games are released to younger users.

  - Complementing human review, Roblox will also utilize its "multimodal moderation system." This advanced AI-driven system can analyze various forms of content within games (visuals, audio, text, and gameplay mechanics) to detect violations of its community standards and identify potentially problematic elements. This combines the nuanced understanding of human testers with the speed and scalability of artificial intelligence.

- Specific Gameplay Criteria: Finally, games must adhere to specific gameplay criteria and possess the appropriate content maturity label to be accessible by younger users. This ensures that the game’s core mechanics, themes, and interactions align with the "Minimal," "Mild," or "Moderate" ratings as defined by Roblox. This step acts as a final qualitative check to ensure alignment with the new age-tier content guidelines.
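Taken together, the three steps behave like a sequence of gates. The sketch below is one way to model that sequence in Python; it is not based on any published Roblox API. The `Developer` and `Game` records, their field names, and the `screen` function are all hypothetical, and the outcomes of human play-testing and AI moderation are reduced to simple booleans for illustration.

```python
from dataclasses import dataclass
from typing import List

# Maturity labels the article lists as eligible for Kids and Select accounts.
YOUNGER_AUDIENCE_LABELS = {"Minimal", "Mild", "Moderate"}


@dataclass
class Developer:
    age: int
    id_verified: bool
    linked_parent_account: bool
    two_step_enabled: bool
    has_roblox_plus: bool


@dataclass
class Game:
    maturity_label: str
    tester_approved: bool          # hypothetical result of play-testing by users 16+
    ai_flagged: bool               # hypothetical result of multimodal moderation
    meets_gameplay_criteria: bool  # hypothetical result of the gameplay check


def developer_eligible(dev: Developer) -> bool:
    """Step 1: developer verification requirements as described in the article."""
    if dev.age < 16 and not (dev.id_verified or dev.linked_parent_account):
        return False
    return dev.two_step_enabled and dev.has_roblox_plus


def screen(dev: Developer, game: Game) -> List[str]:
    """Return the steps that failed; an empty list means the game passes."""
    failures = []
    if not developer_eligible(dev):
        failures.append("developer verification")
    if not game.tester_approved or game.ai_flagged:
        failures.append("real-time evaluation and AI moderation")
    if (not game.meets_gameplay_criteria
            or game.maturity_label not in YOUNGER_AUDIENCE_LABELS):
        failures.append("gameplay criteria and maturity label")
    return failures
```

Returning the list of failed steps rather than a single boolean is a design choice of this sketch: it lets a developer see which requirement blocked a game.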

Broader Implications and Industry Context

Roblox’s new measures are set to have far-reaching implications for its vast ecosystem of users, developers, and parents. For users, particularly children, the changes promise a significantly safer and more curated online experience, reducing exposure to content and interactions that are not age-appropriate. This could enhance parental trust and potentially broaden Roblox’s appeal to families previously hesitant due to safety concerns.

For developers, the new requirements present both opportunities and challenges. While the stringent screening process and subscription requirements might initially seem burdensome, creating content specifically for the "Roblox Kids" and "Roblox Select" tiers could open up new revenue streams and a more dedicated audience segment. The emphasis on safety might also encourage more developers to adhere to best practices, ultimately improving the overall quality and safety of content on the platform. However, it also means developers must carefully consider their target audience and adjust their content creation and monetization strategies accordingly. The requirement for a Roblox Plus subscription for certain developers also links the safety initiatives to the company’s broader business model.

For parents, these changes represent a significant step towards greater control and peace of mind. The clear age segmentation, default chat restrictions, and the ability to whitelist contacts or approve specific games empower parents to tailor their child’s Roblox experience to their family’s values and comfort levels. This could be a powerful differentiator for Roblox in a competitive landscape where child online safety is a paramount concern for guardians.

Industry experts and child safety advocates are likely to view these changes with cautious optimism. While the measures are comprehensive and address many long-standing issues, the practical implementation and ongoing enforcement will be critical. The sheer scale of Roblox’s user-generated content platform means that vigilance and continuous adaptation will always be necessary.

This move also positions Roblox more firmly within a broader global trend of increased regulation and industry self-regulation concerning online child safety. Laws and codes such as the Children’s Online Privacy Protection Act (COPPA) in the United States, the UK’s Age-Appropriate Design Code (also known as the Children’s Code), and the child-specific provisions of the EU’s GDPR (sometimes referred to as GDPR-K) have pushed platforms to adopt more robust protections for minors. Roblox’s restructuring demonstrates an intent to align more closely with these regulatory expectations, potentially mitigating future legal challenges and fostering a more sustainable, trusted platform for its young demographic.

The global rollout of these new account types in June 2026 will be a pivotal moment for Roblox, testing the effectiveness of its new systems and its commitment to fostering a truly safe and enriching environment for its youngest players. The success of this initiative will not only redefine the user experience on Roblox but could also set a new standard for how large-scale user-generated content platforms manage child safety in the evolving digital landscape.