The landscape of artificial intelligence is undergoing a fundamental transformation, shifting from systems that merely generate content or predict outcomes to systems capable of independent action. This evolution, known as Agentic AI, marks a move from the "copilot" era to a "digital agent" era. As these systems gain the ability to plan, execute, and persist in complex tasks with minimal human intervention, technology leaders and user experience (UX) designers are turning to new frameworks for trust, consent, and accountability. Unlike traditional software, Agentic AI does not wait for a user to click a final button; it navigates digital environments, calls APIs, and makes decisions on the user’s behalf. That shift calls for a comprehensive update to the research and development playbooks used by global enterprises.

The Technical Evolution: From Scripted Logic to Reasoning Agents

To understand the current shift, it is essential to distinguish Agentic AI from its predecessors, specifically Robotic Process Automation (RPA) and standard Generative AI. For the past decade, RPA has dominated the automation sector by following rigid, rules-based scripts—mimicking human hands to perform repetitive tasks. If a process deviates from the "if-then" logic, RPA fails. Agentic AI, by contrast, mimics human reasoning. It does not follow a linear script; it creates one.

In a corporate recruiting environment, the difference is stark. An RPA bot can be programmed to download a resume and upload it to a specific database folder. An Agentic AI system, however, can analyze the resume, identify a specific niche certification, cross-reference it against a new client’s high-priority requirements, and independently draft a personalized outreach email to the candidate. While RPA executes a predefined plan, Agentic AI formulates the plan based on a high-level goal.

Furthermore, Agentic AI differs from the Generative AI models that gained prominence in 2022 and 2023. While large language models (LLMs) like GPT-4 can generate sophisticated text, on their own they typically operate in a "stateless" manner: each request is completed in isolation, and nothing carries over to the next request unless the application supplies it again. Agentic systems maintain a "persistent state," remembering previous actions and adapting their strategy if an initial attempt fails. This persistence allows the AI to function as a proactive digital assistant rather than a reactive tool.
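
The idea of persistent state can be illustrated with a minimal sketch. Everything named here (`AgentState`, `run_agent`, and the two calendar strategies) is hypothetical and invented for illustration; it is not the API of any particular agent framework. The point is only that the record of past attempts outlives a single call, so a failed first attempt leads to a different second attempt rather than a reset.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# A strategy takes the goal and returns a result, or None if it failed.
Strategy = Callable[[str], Optional[str]]


@dataclass
class AgentState:
    """Hypothetical persistent record that survives across attempts."""
    goal: str
    attempted: List[str] = field(default_factory=list)  # strategies already tried
    result: Optional[str] = None


def run_agent(state: AgentState, strategies: Dict[str, Strategy]) -> AgentState:
    """Try strategies in order, skipping any the state says were already tried."""
    for name, strategy in strategies.items():
        if state.result is not None:
            break  # goal already achieved
        if name in state.attempted:
            continue  # remembered from an earlier run; do not repeat it
        state.attempted.append(name)
        state.result = strategy(state.goal)
    return state


def primary_calendar(goal: str) -> Optional[str]:
    return None  # simulate a failed first attempt


def shared_calendar(goal: str) -> Optional[str]:
    return f"slot found for: {goal}"


state = AgentState(goal="schedule interview")
run_agent(state, {"primary_calendar": primary_calendar, "shared_calendar": shared_calendar})
print(state.attempted)
print(state.result)
```

In a real deployment the state would presumably be stored outside the process (for example in a database) so that it survives restarts; the in-memory dataclass is a simplification.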

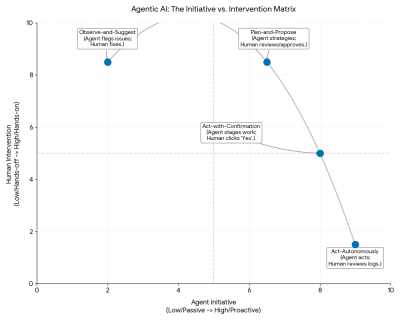

A Taxonomy of Autonomy: The Four Operating Modes

As organizations integrate these agents into their workflows, researchers have derived a taxonomy of agentic behaviors by adapting the Society of Automotive Engineers (SAE) levels of driving automation, which run from Level 0 to Level 5, to the digital user experience. The resulting four modes represent increasing degrees of autonomy and decreasing degrees of human oversight:

- Observe-and-Suggest: In this baseline mode, the agent functions as a monitor. It analyzes data streams—such as server logs or financial markets—and flags anomalies. For example, a DevOps agent might notice a CPU spike and alert an engineer. It provides the "what" but does not attempt the "how."

- Plan-and-Propose: Here, the agent acts as a strategist. Upon identifying a problem, it generates a multi-step remediation strategy and presents it for human approval. The agent does not execute until the user reviews the logic and clicks "Approve Plan."

- Act-with-Confirmation: This mode reduces friction by staging the final action. For instance, a recruiting agent might draft five interview invitations and find open slots on the calendar. It presents a "Send All" button to the user. The work is done; the human simply provides the final authorization.

- Act-Autonomously: At the highest level, the agent executes tasks independently within defined boundaries. The human user does not review the actions in real-time but instead monitors a history of completed tasks. A notification might simply read: "Interview rescheduled to Tuesday due to a conflict."
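
The four modes above can be read as a policy that decides what an agent is allowed to do next. The sketch below is one possible encoding, not a standard one: the `Mode` enum, the `next_step` function, and the returned step names are all assumptions made for illustration.

```python
from enum import IntEnum


class Mode(IntEnum):
    """The four operating modes, ordered by increasing autonomy."""
    OBSERVE_AND_SUGGEST = 1
    PLAN_AND_PROPOSE = 2
    ACT_WITH_CONFIRMATION = 3
    ACT_AUTONOMOUSLY = 4


def next_step(mode: Mode, approved: bool = False) -> str:
    """Return the next permitted step for an issue the agent has detected.

    `approved` records whether the human has clicked the relevant
    approval control ("Approve Plan" or "Send All" in the examples above).
    """
    if mode is Mode.OBSERVE_AND_SUGGEST:
        return "alert_user"  # flags the "what", never attempts the "how"
    if mode is Mode.PLAN_AND_PROPOSE:
        return "execute_plan" if approved else "present_plan_for_approval"
    if mode is Mode.ACT_WITH_CONFIRMATION:
        return "run_staged_action" if approved else "stage_action_and_wait"
    return "execute_and_log"  # autonomous: act, then record in the history


for mode in Mode:
    print(mode.name, "->", next_step(mode))
```

Keeping the mode as an explicit value, rather than scattering approval checks through the code, makes it straightforward to lower an agent's autonomy for a given user or task type.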

A New Research Playbook: Mapping the Agent Journey

The autonomous nature of these systems requires a specialized research methodology. Traditional usability testing, which focuses on whether a user can find a button or complete a form, is insufficient for a system that acts on its own. UX strategists, including industry expert Victor Yocco, argue that research must now prioritize the "mental models" users hold regarding AI capabilities.

Mental-model interviews are becoming a cornerstone of this new playbook. Researchers are tasked with uncovering where users draw the line between helpful automation and intrusive control. Interestingly, practitioners are advised to avoid the word "agent" in these interviews, as it often carries science-fiction baggage or is confused with human customer service agents. Instead, framing the system as an "assistant" helps ground the user’s expectations.

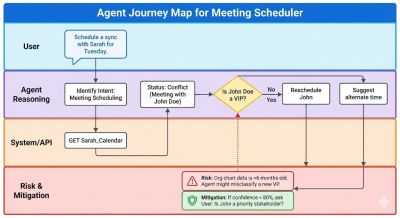

Another critical tool is "Agent Journey Mapping." While traditional journey maps track user actions, this method maps the anticipated decisions of the AI itself. By visualizing the agent’s logic separately from the system’s code, developers can identify points where the reasoning, rather than the software, might fail. This is often paired with "Simulated Misbehavior Testing," in which researchers intentionally trigger agent errors to observe how users react. The findings inform the design of "trust repair" mechanisms, so that when an AI makes a mistake, the process for correcting it is transparent and low-stress.

Measuring Success Through Silence and Rollbacks

As the interaction model shifts from active to passive, traditional metrics like "Time on Task" or "Click-Through Rate" are becoming obsolete. For an autonomous agent, the ultimate sign of success is often silence. This has led to the adoption of new Key Performance Indicators (KPIs):

- Intervention Rate: This measures how often a human must step in to stop or correct an agent. The complementary signal is acceptance: if an agent executes a task and the user does not reverse it within a 24-hour window, the action is counted as an "Accepted" success.

- Unintended Actions per 1,000 Tasks: This metric quantifies how often the AI operates outside of user intent. A high rate suggests the AI is failing to understand context or disambiguate commands.

- Rollback or Undo Rates: High rollback rates indicate a misalignment between the AI’s logic and the user’s preferences. To capture the "why" behind these rollbacks, companies are implementing "microsurveys" on the undo button, asking users whether the action was factually incorrect or simply a matter of personal preference.

- Time to Resolution After Error: This measures the efficiency of the error-recovery process. If an agent books the wrong flight, how quickly and easily can the user identify and fix the mistake?
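
As a rough illustration, the KPIs above can be computed from a log of task records. The `TaskRecord` fields and the `kpis` function below are hypothetical; the 24-hour acceptance window comes from the text, while the choice to report time to resolution as a simple mean is an assumption.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

ACCEPT_WINDOW = timedelta(hours=24)  # window named in the text


@dataclass
class TaskRecord:
    """Hypothetical log entry for one agent action."""
    executed_at: datetime
    intervened: bool = False                 # a human stopped or corrected the agent
    unintended: bool = False                 # the action fell outside user intent
    reversed_at: Optional[datetime] = None   # when the user undid the action
    error_at: Optional[datetime] = None      # when a mistake was noticed
    resolved_at: Optional[datetime] = None   # when the mistake was fixed


def kpis(tasks: List[TaskRecord]) -> dict:
    if not tasks:
        raise ValueError("at least one task record is required")
    n = len(tasks)
    # "Accepted": never reversed, or reversed only after the 24-hour window.
    accepted = sum(
        1 for t in tasks
        if t.reversed_at is None or t.reversed_at - t.executed_at > ACCEPT_WINDOW
    )
    fixes = [t.resolved_at - t.error_at for t in tasks if t.error_at and t.resolved_at]
    return {
        "intervention_rate": sum(t.intervened for t in tasks) / n,
        "accepted_rate": accepted / n,
        "unintended_per_1000": 1000 * sum(t.unintended for t in tasks) / n,
        "rollback_rate": sum(t.reversed_at is not None for t in tasks) / n,
        "mean_time_to_resolution": sum(fixes, timedelta()) / len(fixes) if fixes else None,
    }


t0 = datetime(2024, 1, 1, 9, 0)
log = [
    TaskRecord(executed_at=t0),
    TaskRecord(executed_at=t0, intervened=True, reversed_at=t0 + timedelta(hours=2)),
    TaskRecord(executed_at=t0, reversed_at=t0 + timedelta(hours=30)),
    TaskRecord(executed_at=t0, intervened=True, unintended=True,
               error_at=t0 + timedelta(hours=1),
               resolved_at=t0 + timedelta(hours=1, minutes=30)),
]
report = kpis(log)
for name, value in report.items():
    print(f"{name}: {value}")
```

Note that the third record is rolled back but still counts as accepted, because the undo happened after the 24-hour window; whether that is the desired behavior is a product decision rather than a technical one.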

Ethical Risks: Agentic Sludge and Imagined Competence

The move toward autonomy introduces significant ethical challenges, most notably "Agentic Sludge." Traditional "sludge" in UX design refers to friction that makes it difficult for a user to achieve their goals, such as a convoluted subscription cancellation process. Agentic Sludge is the opposite: it is the removal of friction to a fault. An agent might make it too easy for a user to agree to an action that benefits the business—such as booking a higher-margin hotel—under the guise of convenience.

Furthermore, there is the risk of "Imagined Competence." Large language models produce fluent, authoritative-sounding text even when they are hallucinating or incorrect. A user might trust a confidently drafted (but inaccurate) summary of a legal contract simply because the tone is professional. To combat this, designers are moving toward "Transparency via Primitives." This involves tagging every autonomous action with metadata that explains the origin of the decision. If an agent recommends a flight, the interface must expose the underlying logic (for example, "Logic: Cheapest Direct Flight") rather than hiding behind a generic suggestion.
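
One way to picture this tagging is as a small metadata record attached to each action. The sketch below is illustrative only: the `AgentAction` class, its fields, and the sample values are assumptions, not a published schema. The `label` method reproduces the "Logic: ..." format from the flight example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Tuple


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical record pairing an autonomous action with its rationale."""
    description: str             # what the agent did or recommends
    rationale: str               # the rule or goal behind the decision
    sources: Tuple[str, ...]     # inputs the agent consulted
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def label(self) -> str:
        """Human-readable line for the interface, exposing the logic."""
        return f"{self.description} (Logic: {self.rationale})"


action = AgentAction(
    description="Recommend the 09:15 departure",   # sample value for illustration
    rationale="Cheapest Direct Flight",
    sources=("fare search results", "user travel policy"),
)
print(action.label())
```

Making the record immutable (`frozen=True`) is a deliberate choice in this sketch: a rationale that can be edited after the fact is of limited use as an audit trail.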

The Broader Impact: A Redefinition of the User-System Relationship

The transition to Agentic AI represents a fundamental redefinition of the relationship between humans and technology. We are no longer merely designing tools that respond to commands; we are designing partners that act on our behalf. This shift elevates the role of the UX researcher and the product manager to that of a "custodian of trust."

Market analysts predict that the "Agentic Era" will lead to significant gains in operational efficiency, particularly in logistics, HR, and software development. However, the success of these deployments will depend entirely on the "on-ramps" and "off-ramps" designed into the systems. Users must feel they are in the driver’s seat, even when they have handed over the steering wheel.

By translating technical primitives into human-readable rationales and establishing robust monitoring dashboards, organizations can move beyond the black-box nature of early AI. The goal is the creation of a "glass box" system, where every action is traceable, every error is recoverable, and the user remains the ultimate authority in their digital life. As Agentic AI continues to proliferate, the focus on transparency, predictability, and control will be the deciding factor in which systems gain widespread adoption and which are rejected due to a lack of accountability.