The digital landscape is currently witnessing a critical divergence between the sophistication of global search engines and the stagnation of internal site-search functionality, a phenomenon increasingly known as the Site-Search Paradox. Despite the availability of advanced data analytics and machine learning tools, many enterprise and e-commerce websites continue to provide internal search experiences that mirror the rudimentary indexing systems of the 1990s. This failure in Information Architecture (IA) forces users to abandon local site navigation in favor of global search engines, often using the "site:" operator to find content on a specific domain that the domain’s own search bar failed to retrieve. Industry analysts suggest that this trend represents more than a technical glitch; it is a fundamental breakdown in User Experience (UX) design that directly impacts conversion rates and brand loyalty.

The Historical Context of Web Navigation and Search

To understand the current crisis in internal search, it is necessary to examine the chronological evolution of web navigation. In the early 1990s, the World Wide Web was primarily navigated through hierarchical directories. Search bars were considered a luxury, implemented only when a site’s content grew beyond the capacity of a standard "click-to-navigate" menu. These early search tools functioned as literal digital indices, much like the back of a physical textbook. They relied on exact string matching, meaning a user had to input the precise terminology used by the site’s creators to find a result.

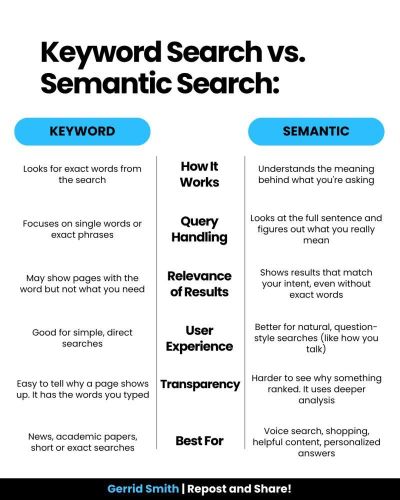

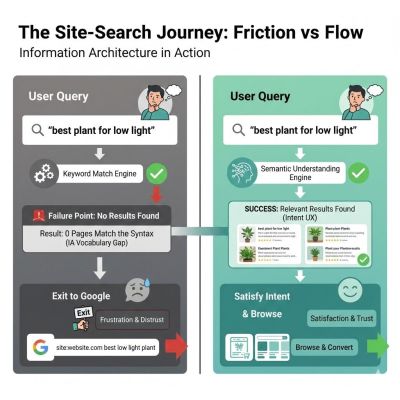

By the early 2000s, Google began to redefine user expectations by moving away from literal keyword matching toward semantic understanding. While global search engines evolved to interpret intent, internal site search remained largely tethered to database-driven exact matches, and the gap between what users expect and what site search delivers widened. The modern user has been psychologically "rewired" by twenty-five years of sophisticated search experiences. On arriving at a website, users rarely attempt to learn its specific taxonomy or organizational logic. Instead, research indicates that approximately 50% of users navigate directly to the search bar as their primary mode of interaction. When that search bar fails to accommodate natural language, typos, or synonyms, the user experience collapses, and the user pays what is known as the "Syntax Tax."

The Mechanics of the Syntax Tax and Information Architecture Failure

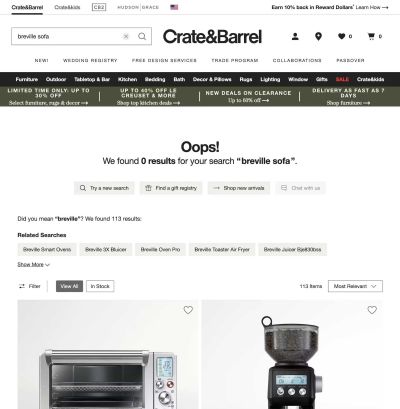

The "Syntax Tax" refers to the cognitive load and frustration imposed on users when a system requires them to guess the specific internal vocabulary of an organization. This issue is rooted in a failure of Information Architecture—specifically, the preference for "strings" over "things." In a string-based system, the search engine looks for a literal sequence of characters. If a user searches for a "sofa" on a furniture site that has categorized its inventory under "couches," a string-based engine will return a "0 Results Found" screen.
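The difference between "strings" and "things" can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the catalog contents, the `SYNONYMS` map, and both function names are hypothetical, and a real system would typically maintain synonyms inside the search engine rather than in application code.

```python
# Hypothetical catalog: products are indexed under the site's own label.
CATALOG = {
    "couches": ["Harlow 3-Seat Couch", "Mesa Sectional Couch"],
    "armchairs": ["Finch Armchair"],
}

# Assumed synonym map from user vocabulary to the site's canonical term.
SYNONYMS = {
    "sofa": "couches",
    "sofas": "couches",
    "couch": "couches",
    "settee": "couches",
}


def string_search(query: str) -> list[str]:
    """Literal lookup: succeeds only if the query equals a catalog label."""
    return CATALOG.get(query.strip().lower(), [])


def thing_search(query: str) -> list[str]:
    """Resolve the query to a canonical concept first, then look it up."""
    term = query.strip().lower()
    canonical = SYNONYMS.get(term, term)
    return CATALOG.get(canonical, [])
```

With this data, `string_search("sofa")` returns an empty list (the "0 Results Found" case), while `thing_search("sofa")` returns both couches.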

This creates a digital dead end. From a psychological perspective, the user does not assume they need to try a synonym; they conclude the site does not carry the product. According to data from the Baymard Institute, 41% of e-commerce sites fail to support basic symbols or abbreviations in their search queries. Furthermore, many internal systems lack support for "stemming" and "lemmatization," text-normalization techniques that allow a search engine to recognize that "running," "runs," and "ran" share the same root. Stemming strips suffixes, which is enough to reduce "running" and "runs" to "run"; lemmatization uses vocabulary knowledge to map irregular forms such as "ran" to the same base word. Without these technical foundations, an internal search engine treats "Running Shoe" and "Running Shoes" as entirely different entities, effectively punishing the user for natural linguistic variations.
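As a rough sketch of the idea, the snippet below normalizes query tokens with a deliberately naive suffix stripper plus a tiny lookup table for irregular forms. It is not the Porter algorithm or any library's stemmer; the `IRREGULAR` table and both function names are assumptions made for illustration, and the rules will mis-stem many real words (for example, "falling" becomes "fal").

```python
import re

# Tiny hand-written lemma table for irregular forms (illustrative only).
IRREGULAR = {"ran": "run"}


def normalize_token(token: str) -> str:
    """Reduce a word to a crude root using two naive suffix rules."""
    token = token.lower()
    if token in IRREGULAR:
        return IRREGULAR[token]
    if token.endswith("ing") and len(token) > 5:
        stem = token[:-3]
        # Collapse a doubled final letter: "runn" -> "run".
        if len(stem) >= 2 and stem[-1] == stem[-2]:
            stem = stem[:-1]
        return stem
    if token.endswith("s") and not token.endswith("ss") and len(token) > 3:
        return token[:-1]
    return token


def normalize_query(query: str) -> tuple[str, ...]:
    """Lowercase, tokenize, and normalize every token in a query."""
    tokens = re.findall(r"[a-z0-9]+", query.lower())
    return tuple(normalize_token(t) for t in tokens)
```

If both the indexed titles and the incoming query pass through `normalize_query`, "Running Shoe" and "Running Shoes" compare equal instead of being treated as different entities.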

Supporting Data and the Economic Impact of Search Failure

The implications of the Site-Search Paradox are measurable in financial terms. Forrester Research has noted that users who utilize a site’s search function are two to three times more likely to convert than those who simply browse, provided the search results are relevant. Conversely, approximately 80% of users will exit a site if the internal search results are poor. This high abandonment rate underscores the importance of search as a critical touchpoint in the customer journey.

A case study involving a large-scale technical enterprise illustrates the tangible benefits of resolving IA issues. The organization maintained over 5,000 technical documents but suffered from an internal search exit rate that hindered productivity. An audit revealed that the search engine was indexing documents by their internal SKU numbers (e.g., "DOC-9928-X") rather than human-readable titles. While users were searching for terms like "installation guide," the engine found no matches because those terms were not in the title tags. By implementing a "Controlled Vocabulary"—a standardized set of terms mapping SKUs to human language—the organization saw a 40% drop in search page exit rates within 90 days. This fix was not algorithmic but structural, proving that a search engine is only as effective as the metadata scaffolding it rests upon.
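A controlled vocabulary of this kind can be pictured as a mapping layered on top of the existing index. The sketch below is an assumption about how such a mapping might look, not the organization's actual implementation: only the SKU "DOC-9928-X" comes from the case study, while the second SKU, the vocabulary terms, and the function names are hypothetical.

```python
# Titles as originally indexed: the SKU is the only searchable text.
TITLES = {
    "DOC-9928-X": "DOC-9928-X",
    "DOC-1040-B": "DOC-1040-B",  # hypothetical second document
}

# Hypothetical controlled vocabulary mapping each SKU to human language.
CONTROLLED_VOCABULARY = {
    "DOC-9928-X": ["installation guide", "setup instructions"],
    "DOC-1040-B": ["warranty policy"],
}


def build_index(titles: dict, vocabulary: dict) -> dict:
    """Map every searchable phrase (lowercased) to the set of matching SKUs."""
    index: dict = {}
    for doc_id, title in titles.items():
        for phrase in [title] + vocabulary.get(doc_id, []):
            index.setdefault(phrase.lower(), set()).add(doc_id)
    return index


def search(index: dict, query: str) -> list:
    """Return the SKUs whose indexed phrases contain the query text."""
    q = query.strip().lower()
    hits = set()
    for phrase, doc_ids in index.items():
        if q and q in phrase:
            hits |= doc_ids
    return sorted(hits)
```

Searching an index built with an empty vocabulary for "installation guide" finds nothing; the same query against `build_index(TITLES, CONTROLLED_VOCABULARY)` returns `["DOC-9928-X"]`. The search function is unchanged in both cases, which mirrors the point that the fix was structural rather than algorithmic.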

The Internal Language Gap and the Curse of Knowledge

A recurring challenge identified by UX professionals is the "Internal Language Gap," often exacerbated by the "curse of knowledge" within corporate environments. Internal teams frequently become so immersed in their own jargon that they lose sight of how an outsider describes their products or services.

For instance, a prominent financial institution reported high call volumes to its support center regarding "loan payoffs." An analysis of search logs revealed that "loan payoff" was the most frequently searched term that yielded zero results. The institution’s IA team had labeled all relevant documentation under the formal legal term "Loan Release." Because the search engine was programmed for literal string matching, it could not bridge the gap between the user’s common terminology and the bank’s official nomenclature. By adding "loan payoff" as a hidden metadata keyword to the "Loan Release" pages, the institution resolved a multi-million dollar support issue without requiring a faster server or a more complex algorithm. The solution was an "empathetic taxonomy" that prioritized user intent over corporate formality.
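The two steps in this example, finding the top zero-result query in the logs and then attaching it to the right page as hidden metadata, might look roughly like the following. The `Page` class, the sample log, and the function names are all hypothetical; real search logs and content models will differ.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Page:
    title: str
    # Hidden metadata keywords: searchable, but never shown to the user.
    keywords: set = field(default_factory=set)

    def matches(self, query: str) -> bool:
        q = query.strip().lower()
        if not q:
            return False
        return q in self.title.lower() or q in self.keywords


def search(pages: list, query: str) -> list:
    """Return the titles of all pages matching the query."""
    return [p.title for p in pages if p.matches(query)]


def top_zero_result_query(pages: list, log: list) -> Optional[str]:
    """Return the most frequent logged query that matches no page."""
    misses = Counter(q.strip().lower() for q in log if not search(pages, q))
    return misses.most_common(1)[0][0] if misses else None


# Hypothetical content and search log.
PAGES = [Page("Loan Release"), Page("Mortgage Rates")]
SEARCH_LOG = ["loan payoff", "Loan Payoff", "loan release",
              "mortgage rates", "loan payoff "]
```

Here `top_zero_result_query(PAGES, SEARCH_LOG)` surfaces "loan payoff". After `PAGES[0].keywords.add("loan payoff")`, the same query returns the "Loan Release" page, and the visible title stays untouched.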

Probabilistic Design: Moving Beyond Binary Search Results

Traditional Information Architecture often operates on a binary logic: a result is either a match or it is not. However, modern search theory suggests that users expect a "probabilistic" approach, where results are displayed based on confidence levels. This is the "UX of Maybe."

In a probabilistic model, the "No Results Found" page is replaced by a "Did You Mean?" state or a "Fuzzy Match" interface. If a user searches for an item in a specific category and fails to find an exact match, a well-designed IA will suggest related items in adjacent categories. For example, if a search for a specific electronic component yields no results, the system might state, "We didn’t find that in ‘Hardware,’ but we found 3 matches in ‘Accessories’." This keeps the user within the site’s ecosystem and maintains the flow of the interaction, preventing the pivot to a global search engine.
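One possible shape for this fallback chain is sketched below: try the requested category, then adjacent categories, then a fuzzy "Did You Mean?" suggestion. The inventory, the result dictionary format, and the 0.6 similarity cutoff are assumptions for illustration; `difflib.get_close_matches` from the Python standard library stands in for whatever fuzzy matcher a real search engine provides.

```python
import difflib

# Hypothetical inventory grouped by category.
INVENTORY = {
    "Hardware": ["hdmi cable", "usb hub"],
    "Accessories": ["usb-c adapter", "laptop sleeve", "usb-c cable"],
}


def search(query: str, category: str, cutoff: float = 0.6) -> dict:
    """Return an exact, adjacent-category, fuzzy, or empty result state."""
    q = query.strip().lower()

    # 1. Matches inside the category the user asked for.
    exact = [item for item in INVENTORY.get(category, []) if q in item]
    if exact:
        return {"state": "exact", "category": category, "items": exact}

    # 2. Matches in adjacent categories, with a message that says so.
    for other, items in INVENTORY.items():
        if other == category:
            continue
        hits = [item for item in items if q in item]
        if hits:
            noun = "match" if len(hits) == 1 else "matches"
            message = (f"We didn't find that in '{category}', "
                       f"but we found {len(hits)} {noun} in '{other}'.")
            return {"state": "adjacent", "category": other,
                    "items": hits, "message": message}

    # 3. Fuzzy suggestions across the whole inventory ("Did You Mean?").
    all_items = [item for items in INVENTORY.values() for item in items]
    suggestions = difflib.get_close_matches(q, all_items, n=3, cutoff=cutoff)
    if suggestions:
        return {"state": "did_you_mean", "items": suggestions}

    # 4. Only now fall back to a true empty state.
    return {"state": "no_results", "items": []}
```

For instance, `search("usb-c", "Hardware")` returns the adjacent-category state with two items from "Accessories", and the misspelled `search("laptop sleve", "Accessories")` suggests "laptop sleeve" rather than showing an empty page.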

The Risks of Outsourcing: The Google-Powered Search Bar Debate

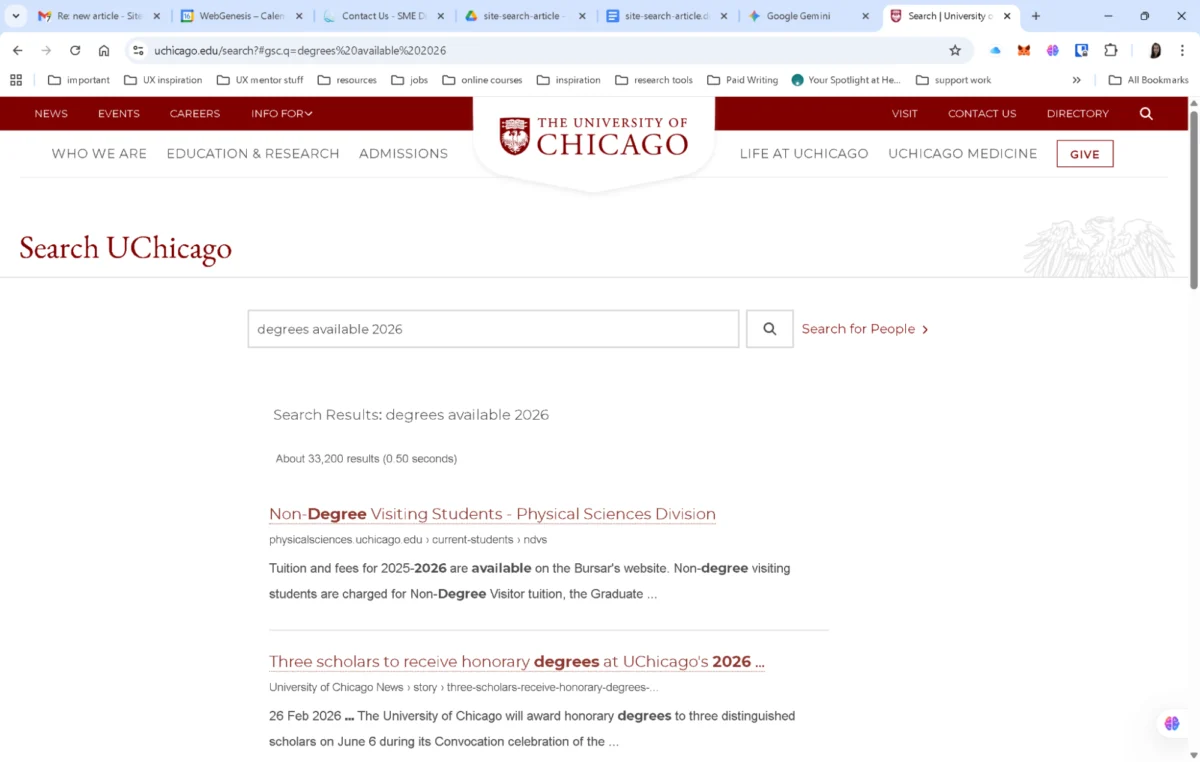

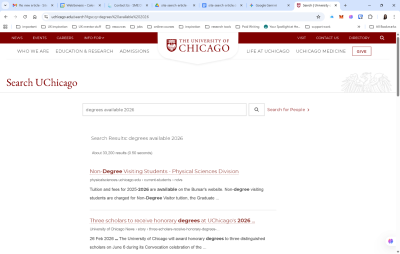

In response to the difficulties of maintaining internal search, some organizations, such as the University of Chicago, have implemented "Google-powered" search bars. While this provides an immediate upgrade in algorithmic power, industry experts warn that it represents an admission of organizational complexity that the site’s own navigation cannot handle.

Outsourcing the search experience to a third-party algorithm like Google’s Site Search (or its successors) involves significant trade-offs. Organizations lose the ability to promote specific content, products, or internal priorities. Furthermore, it often exposes users to third-party advertisements and trains them to leave the site’s ecosystem the moment they encounter a hurdle. For a business, search should ideally function as a curated conversation that guides the customer toward a specific goal. Delegating this to an external entity turns a potential conversion opportunity into a generic list of links that may lead the user directly to a competitor’s site.

A Framework for Reclaiming the Internal Search Experience

To combat the Site-Search Paradox, UX and IA professionals are increasingly adopting a four-phase audit framework designed to treat search as a living product rather than a static utility.

- The Zero-Result Audit: This involves a 90-day review of search logs to identify queries that returned no results. These are typically categorized into "Synonym Gaps" (users using different words), "Content Gaps" (users looking for things the site doesn’t have), and "Format Gaps" (users looking for specific document types).

- Query Intent Mapping: Analysts categorize the top 50 queries into Navigational, Informational, or Transactional intents. A navigational search (e.g., "login") should ideally bypass the results page and take the user directly to the destination.

- The Fuzzy Matching Test: This technical audit involves intentionally mistyping top products and using regional spelling variations (e.g., "color" vs. "colour"). If the system fails to return results, it indicates a lack of typo tolerance or spelling-variant handling, requiring a technical intervention. Stemming alone does not fix this, because it normalizes word endings rather than misspellings.

- Scoping and Filtering UX: This phase ensures that the filters provided on the results page are contextually relevant. A generic filter is often as ineffective as no filter at all; a search for "shoes" should trigger filters for size and color, while a search for "laptops" should trigger filters for processor speed and RAM.
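The second and fourth phases above lend themselves to a small rule-based sketch: route navigational queries straight to their destination, and choose filters by what was searched. Everything here is hypothetical, including the URL paths, the hint words, and the facet table; in practice these rules would be derived from the site's own top queries rather than hard-coded.

```python
# Hypothetical navigational queries and their destinations.
NAVIGATIONAL = {
    "login": "/login",
    "contact": "/contact",
    "order status": "/orders",
}

# Assumed words that signal an intent to purchase.
TRANSACTIONAL_HINTS = {"buy", "price", "order", "discount"}

# Contextual filters per product term, with a generic fallback.
FACETS = {
    "shoes": ["size", "color"],
    "laptops": ["processor speed", "RAM"],
}
DEFAULT_FACETS = ["category"]


def classify_intent(query: str) -> str:
    """Label a query as navigational, transactional, or informational."""
    q = query.strip().lower()
    if q in NAVIGATIONAL:
        return "navigational"
    if any(word in TRANSACTIONAL_HINTS for word in q.split()):
        return "transactional"
    return "informational"


def route(query: str) -> dict:
    """Redirect navigational queries; otherwise pick contextual filters."""
    q = query.strip().lower()
    intent = classify_intent(q)
    if intent == "navigational":
        # Bypass the results page entirely.
        return {"action": "redirect", "target": NAVIGATIONAL[q]}
    words = q.split()
    filters = next(
        (facets for term, facets in FACETS.items() if term in words),
        DEFAULT_FACETS,
    )
    return {"action": "results", "intent": intent, "filters": filters}
```

With these rules, `route("Login")` redirects to the login page without showing results, while `route("buy running shoes")` returns a transactional results page with size and color filters.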

Broader Implications for the Future of UX

The continued failure of internal site search carries broader implications for the future of digital commerce and information retrieval. As voice search and AI-driven conversational interfaces become more prevalent, the demand for semantic understanding will only increase. Organizations that continue to rely on literal string matching will find themselves increasingly invisible to their own customers.

Success in the modern digital era is no longer defined solely by the volume of content an organization produces, but by the "findability" of that content. The search bar is the only location on a website where a user provides direct, unprompted feedback on their needs in their own words. Failing to interpret those words is a failure of empathy and strategy. By shifting from literal indexing to human-centered Information Architecture, businesses can close the gap between user intent and digital results, reclaiming the search box from global engines and fostering a more direct, effective relationship with their audience.