The modern user experience is increasingly defined not by the volume of content a platform hosts, but by the efficiency with which that content can be retrieved. Despite the proliferation of sophisticated data management tools and expansive digital libraries, internal site search mechanisms frequently fail to meet basic user expectations, creating a phenomenon known as the Site-Search Paradox. This paradox describes a scenario where users, unable to navigate a local website’s internal architecture, abandon the site to use global search engines like Google to find specific pages on that very same domain. This systemic failure in internal Information Architecture (IA) is driving a significant shift in digital strategy as organizations struggle to retain users within their own ecosystems.

The history of the web reveals a fundamental shift in how search bars are perceived and utilized. In the early iterations of the internet, a search bar was considered a secondary luxury, typically implemented only after a site became too large for traditional hierarchical navigation. These early systems functioned much like a printed book index, relying on literal, alphabetical string matching. If a user did not input the exact terminology used by the author, they were met with a "0 Results Found" notification—a digital dead end. Twenty-five years later, while user behavior has been fundamentally rewired by the sophisticated algorithms of global search providers, many internal search bars still operate on these 1990s-era logic models, punishing users for common linguistic variations or minor typographical errors.

The Rise of the Search-First User

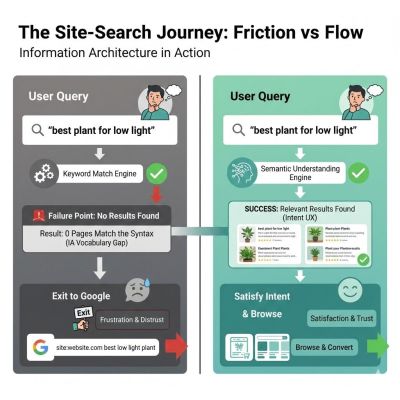

The behavioral patterns of contemporary web users have undergone a radical transformation. Research conducted by Origin Growth into the "Search vs. Navigate" dichotomy indicates that approximately 50% of users now bypass global navigation menus entirely, heading straight for the search box upon landing on a site. This "search-first" mentality places an immense burden on the internal search engine. When a user enters a query and the system fails to deliver relevant results, the user rarely concludes that they should try a synonym. Instead, the prevailing assumption is that the site simply does not possess the required information or product.

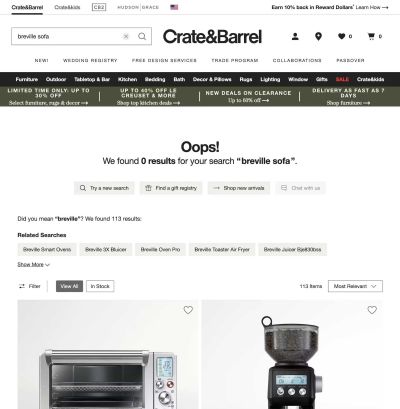

This disconnect is often the result of what experts term the "Syntax Tax." This refers to the cognitive load placed on a user when they are required to guess the specific string of characters used in a site’s database. For instance, if a furniture retailer categorizes items under the term "couches" but a user searches for "sofa," a string-based search engine will return no results. This is a failure of Information Architecture; the system is designed to match literal sequences of letters rather than the conceptual "things" behind those words. By forcing users to adopt a specific corporate vocabulary, organizations are effectively taxing the brainpower of their customers, leading to immediate site abandonment.
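One common way to reduce the Syntax Tax is to expand each query with synonyms before matching. The following is a minimal sketch of that idea, not a description of any particular search product. The synonym groups, the catalog, and the function names `expand_query` and `search` are all hypothetical.

```python
# Hypothetical synonym groups; a real site would curate these from its own search logs.
SYNONYM_GROUPS = [
    {"sofa", "sofas", "couch", "couches"},
    {"tv", "television"},
]

# Hypothetical catalog: product title -> index terms stored in the site's database.
CATALOG = {
    "Three-seat fabric couch": {"couches", "fabric"},
    "Oak coffee table": {"tables", "oak"},
}


def expand_query(term: str) -> set[str]:
    """Return the query term plus every synonym that shares a group with it."""
    term = term.strip().lower()
    expanded = {term}
    for group in SYNONYM_GROUPS:
        if term in group:
            expanded |= group
    return expanded


def search(term: str) -> list[str]:
    """Match products whose index terms overlap the expanded query."""
    wanted = expand_query(term)
    return [title for title, terms in CATALOG.items() if terms & wanted]
```

With this mapping in place, a search for "sofa" reaches the product indexed under "couches", so the user never has to guess the retailer's vocabulary.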

The Economic Impact of Poor Findability

The consequences of search failure are not merely aesthetic or navigational; they are financial. Data from the Baymard Institute reveals that 41% of e-commerce sites fail to support even basic symbols or abbreviations in their search queries. This technical oversight leads to high abandonment rates, as users increasingly lack the patience to navigate around a site’s internal limitations.

According to research from Forrester, users who utilize the search function on a website are two to three times more likely to convert than those who do not, provided that the search function works correctly. Conversely, approximately 80% of users on e-commerce platforms will exit a site following a poor search experience. When an internal search box fails, users often resort to a "site:domain.com [query]" search on Google. In the worst-case scenario, the user may simply perform a general search and land on a competitor’s website, representing a total loss of the customer acquisition investment.

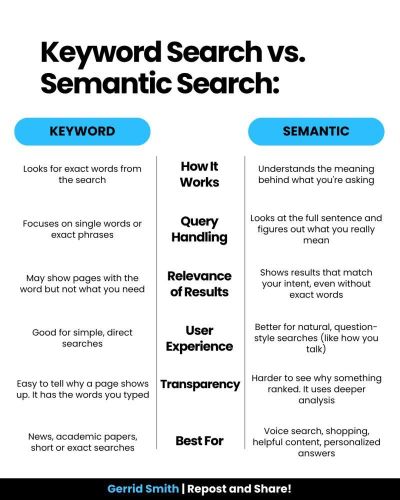

Technical Barriers: String Matching vs. Semantic Intent

The primary technical divide between successful global search engines and failing internal systems lies in the transition from keyword matching to semantic understanding. Google’s dominance is a product not solely of raw processing power but also of its mastery of contextual Information Architecture. Global engines utilize linguistic techniques such as stemming and lemmatization, which recognize "running," "ran," and "runs" as variations of the same root word and therefore of the same intent.
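To make the idea concrete, here is a deliberately naive sketch of word normalization. It combines a small lookup table for irregular forms (in the spirit of lemmatization) with suffix stripping (in the spirit of stemming). The table, the rules, and the names `normalize` and `same_term` are illustrative assumptions. Production systems typically rely on an established stemmer or lemmatizer rather than hand-written rules like these.

```python
# Hypothetical table of irregular forms; a real lemmatizer relies on a full dictionary.
IRREGULAR_FORMS = {"ran": "run", "feet": "foot"}


def normalize(word: str) -> str:
    """Reduce an English word to a rough base form (deliberately naive)."""
    word = word.strip().lower()
    if word in IRREGULAR_FORMS:  # lemmatization-style dictionary lookup
        return IRREGULAR_FORMS[word]
    if word.endswith("ing") and len(word) > 5:  # stemming-style suffix stripping
        stem = word[:-3]
        # "running" -> "runn" -> "run": collapse a doubled final consonant
        if len(stem) > 2 and stem[-1] == stem[-2]:
            stem = stem[:-1]
        return stem
    if word.endswith("s") and not word.endswith("ss") and len(word) > 3:
        return word[:-1]  # "runs" -> "run", "shoes" -> "shoe"
    return word


def same_term(query: str, indexed: str) -> bool:
    """Compare two words after normalizing both sides the same way."""
    return normalize(query) == normalize(indexed)
```

The rules will mangle some words, but because the query and the indexed term pass through the same function, consistency matters more than linguistic accuracy for matching purposes.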

Most internal search engines remain "blind" to this context. To a primitive search algorithm, "Running Shoe" and "Running Shoes" are entirely different entities. If the database only contains the singular form, a search for the plural may yield no results. Without stemming for word variants or "fuzzy matching" for near-misses such as typos, the user experience is binary: either an exact match is found, or the user is told nothing exists. Modern UX standards demand a shift toward probabilistic results, where the system ranks candidates by "confidence levels" and suggests "Did you mean?" alternatives rather than delivering a cold "No Results Found" screen.
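A "Did you mean?" fallback can be approximated with nothing more than Python's standard `difflib` module. The sketch below assumes a small hypothetical list of indexed terms and uses the similarity ratio as a stand-in for a confidence level. The `suggest` function and the 0.6 cutoff are illustrative choices, not a recommended configuration.

```python
import difflib

# Hypothetical list of terms that exist in the site's index.
INDEXED_TERMS = ["running shoe", "trail shoe", "rain jacket"]


def suggest(query: str, cutoff: float = 0.6) -> list[tuple[str, float]]:
    """Return up to three near matches with a similarity score, best first."""
    query = query.strip().lower()
    matches = difflib.get_close_matches(query, INDEXED_TERMS, n=3, cutoff=cutoff)
    return [
        (term, round(difflib.SequenceMatcher(None, query, term).ratio(), 2))
        for term in matches
    ]


# A misspelled plural still surfaces the singular indexed term as the top suggestion.
top_suggestion = suggest("runing shoes")[0][0]
```

Instead of a dead end, the interface can show the top suggestion as a "Did you mean?" link and fall back to a no-results message only when the list is empty.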

Case Analysis: The Internal Language Gap

A recurring theme in the failure of internal search is the "curse of knowledge" within corporate teams. Internal stakeholders often become so immersed in business jargon that they forget the end-user does not share their technical vocabulary.

In one notable case study involving a major financial institution, the organization experienced a surge in support center calls from customers who claimed they could not find "loan payoff" information on the company website. An analysis of the search logs revealed that "loan payoff" was the most frequently searched term that resulted in zero hits. The failure was rooted in the site’s Information Architecture: the internal team had labeled all relevant documentation under the formal legal term "Loan Release." To the bank, a "payoff" was a process, while the "Loan Release" was the actual document stored in the database. Because the search engine was looking for literal character strings, it could not bridge the gap between the user’s intent and the company’s official terminology.

A similar issue was identified at a large-scale enterprise managing over 5,000 technical documents. The internal search engine was returning irrelevant results because the "Title" tag for every document was an internal SKU number (e.g., "DOC-9928-X") rather than a human-readable description. Queries for "installation guide" went unmatched because that phrase did not appear anywhere in the SKU-based metadata. By implementing a Controlled Vocabulary (a standardized set of terms mapping SKUs to human language), the organization saw a 40% drop in search page exit rates within three months. These examples underscore that search performance is often an IA problem rather than an algorithmic one.
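In data terms, a Controlled Vocabulary of this kind can be as simple as a lookup table that attaches human-readable labels to each SKU-style title, plus an inverted index built from it. The sketch below is an assumption about how such a mapping might look. Apart from the "DOC-9928-X" example, every identifier, label, and function name is hypothetical.

```python
# Hypothetical controlled vocabulary: SKU-style title -> human-readable labels.
CONTROLLED_VOCABULARY = {
    "DOC-9928-X": ["installation guide", "setup instructions"],
    "DOC-1044-B": ["warranty terms"],  # hypothetical second document
}


def build_index(vocabulary: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert the vocabulary so each human-readable label points to its SKUs."""
    index: dict[str, list[str]] = {}
    for sku, labels in vocabulary.items():
        for label in labels:
            index.setdefault(label.lower(), []).append(sku)
    return index


def find_documents(query: str, index: dict[str, list[str]]) -> list[str]:
    """Look up documents by the words users actually type."""
    return index.get(query.strip().lower(), [])


LABEL_INDEX = build_index(CONTROLLED_VOCABULARY)
```

A query for "installation guide" now resolves to "DOC-9928-X" even though the phrase never appears in the document's SKU-based title.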

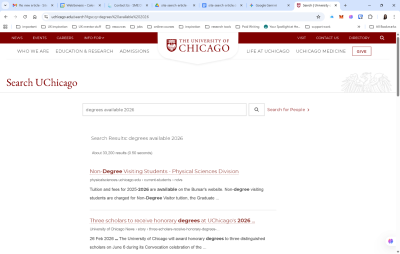

The Google Dilemma: Surrendering the Ecosystem

In response to these challenges, some large institutions, such as the University of Chicago, have opted to integrate "Google-powered" search bars directly into their sites. While this provides an immediate fix for findability, it is often viewed by UX professionals as a white flag of surrender. By delegating search to a third-party algorithm, an organization loses control over the user experience.

Relying on external search engines within a local site means the organization can no longer promote specific products or prioritize certain content based on business goals. Furthermore, it exposes users to third-party advertisements and trains them to leave the organization’s ecosystem the moment they require assistance. For a business, search should be a curated conversation that guides a customer toward a specific goal, not a generic list of links that pushes them back toward the open web.

A Methodological Approach to Search Auditing

To reclaim the search box from global engines, IA professionals are adopting rigorous audit frameworks. This process involves moving away from a "set it and forget it" mentality and treating search as a living product.

- The Zero-Result Audit: Organizations are now routinely pulling search logs to analyze queries that returned no results. These are typically categorized into "Synonyms" (words the site uses differently), "Typos" (human error), and "Missing Content" (actual gaps in the site’s offerings).

- Query Intent Mapping: By analyzing the 50 most common queries, designers can determine if users are seeking specific pages (Navigational), "how-to" guides (Informational), or products (Transactional). A sophisticated UI will look different for each intent, potentially bypassing the results page entirely for navigational searches.

- Fuzzy Matching Verification: Technical teams are increasingly testing systems against intentional mistypes, plurals, and regional spelling variations (e.g., "color" vs. "colour"). A system that fails these tests lacks typo tolerance, stemming, or spelling normalization, all of which are now considered baseline requirements for modern web search.

- Scoping and Filtering UX: Effective search must include filters that are contextually relevant. If a user searches for "shoes," the interface should provide filters for size and color. Generic, site-wide filters are often as unhelpful as having no filters at all.
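The Zero-Result Audit from the list above lends itself to a first-pass script. The sketch below sorts failed queries into the three categories named there, assuming the team already maintains a list of site terms and a synonym map. Both lists, the 0.8 similarity cutoff, and the function names are hypothetical, and a human reviewer would still need to check the output.

```python
import difflib
from collections import Counter

# Hypothetical inputs: terms the site actually uses, and synonyms already identified.
SITE_TERMS = ["couch", "loan release", "running shoe"]
KNOWN_SYNONYMS = {"sofa": "couch", "loan payoff": "loan release"}


def classify_zero_result(query: str) -> str:
    """Assign a failed query to one of the three audit categories."""
    q = query.strip().lower()
    if q in KNOWN_SYNONYMS:
        return "Synonyms"
    # A very close match to an existing term suggests a typing error.
    if difflib.get_close_matches(q, SITE_TERMS, n=1, cutoff=0.8):
        return "Typos"
    return "Missing Content"


def audit(zero_result_queries: list[str]) -> dict[str, int]:
    """Count how many failed queries fall into each category."""
    return dict(Counter(classify_zero_result(q) for q in zero_result_queries))
```

Running `audit` over a month of zero-result queries gives a rough picture of whether the bigger problem is vocabulary, typo tolerance, or genuinely missing content.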

Broader Impact and the Future of Search

The shift from literal string matching to semantic understanding represents a broader movement toward "associative" search, which mimics the way the human brain functions. This involves creating "semantic scaffolding" where a search for a product returns not just a purchase link, but also related manuals, FAQs, and spare parts.
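One way to picture this scaffolding is as a product record that carries links to its supporting content, so a single query can return the whole cluster. The structure below is only an illustrative sketch. The `ProductEntry` class, the catalog entry, and all paths are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ProductEntry:
    """A product plus the supporting content linked to it."""
    purchase_url: str
    related: dict[str, list[str]] = field(default_factory=dict)


# Hypothetical catalog and paths, for illustration only.
CATALOG = {
    "espresso machine": ProductEntry(
        purchase_url="/products/espresso-machine",
        related={
            "manuals": ["/manuals/espresso-machine.pdf"],
            "faqs": ["/faq/descaling"],
            "spare_parts": ["/parts/portafilter-gasket"],
        },
    ),
}


def associative_search(query: str) -> dict[str, list[str]]:
    """Return the purchase link together with every related resource group."""
    entry = CATALOG.get(query.strip().lower())
    if entry is None:
        return {}
    return {"purchase": [entry.purchase_url], **entry.related}
```

A results page built on this shape can group the purchase link, manuals, FAQs, and spare parts under one heading rather than scattering them across a flat list.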

The ultimate goal for modern Information Architecture is to transition the search bar from a "Librarian" to a "Concierge." While a librarian provides the exact location of a requested item, a concierge listens to the underlying intent and provides a recommendation. Predictive text is being used not just to complete words, but to suggest intentions.

In conclusion, the search box remains the only place on a digital platform where the user explicitly states their needs in their own words. Failing to understand those words is more than a technical glitch; it is a failure to understand the customer. By implementing robust, human-centered Information Architecture and moving toward semantic scaffolding, organizations can close the findability gap and ensure that the "Big Box" of global search does not become the only way users can navigate the local web. Success in the modern digital landscape is no longer about the volume of content, but the ease with which it can be found.