The global technology landscape is moving from generative artificial intelligence, which focuses on content creation and information synthesis, to agentic artificial intelligence, characterized by the ability to execute complex tasks autonomously. This move from "suggesting" to "acting" represents a paradigm shift in human-computer interaction, and it calls for a user experience (UX) architecture that prioritizes transparency, control, and psychological safety. As AI systems change from passive advisors into active agents capable of booking flights, managing cloud infrastructure, and handling financial transactions, the industry is grappling with the "trust gap": the discrepancy between what a system can technically do and what a user feels comfortable allowing it to do.

The Evolution of AI Interaction: A Chronological Context

To understand the necessity of new design patterns, one must examine the rapid evolution of AI over the last few years. In 2022, the primary mode of AI interaction was "Search and Retrieval," where early LLM-based systems acted as advanced encyclopedias. By 2023, the industry had moved into the "Generative Era," with tools like ChatGPT and Midjourney producing creative outputs from user prompts. However, 2024 and 2025 have seen the rise of "Agentic AI": systems that do not just write an itinerary but actually interface with APIs to purchase tickets, negotiate with vendors, and rectify errors in real time.

This evolution has outpaced traditional UX methodologies. While generative AI required "prompt engineering," agentic AI requires "oversight engineering." The risk profile has shifted from "hallucinations in text" to "operational failures in the real world." Consequently, the design of these systems is no longer a matter of aesthetic preference but a requirement for functional safety and organizational liability management.

Core UX Patterns: The Architecture of Agency

Building a trustworthy agentic system requires moving beyond the "black box" model. Industry experts and UX researchers have identified six foundational design patterns that together span the lifecycle of an agentic interaction. These patterns ensure that autonomy is treated as a privilege granted by the user, rather than a right seized by the machine.

1. The Intent Preview and Plan Summary

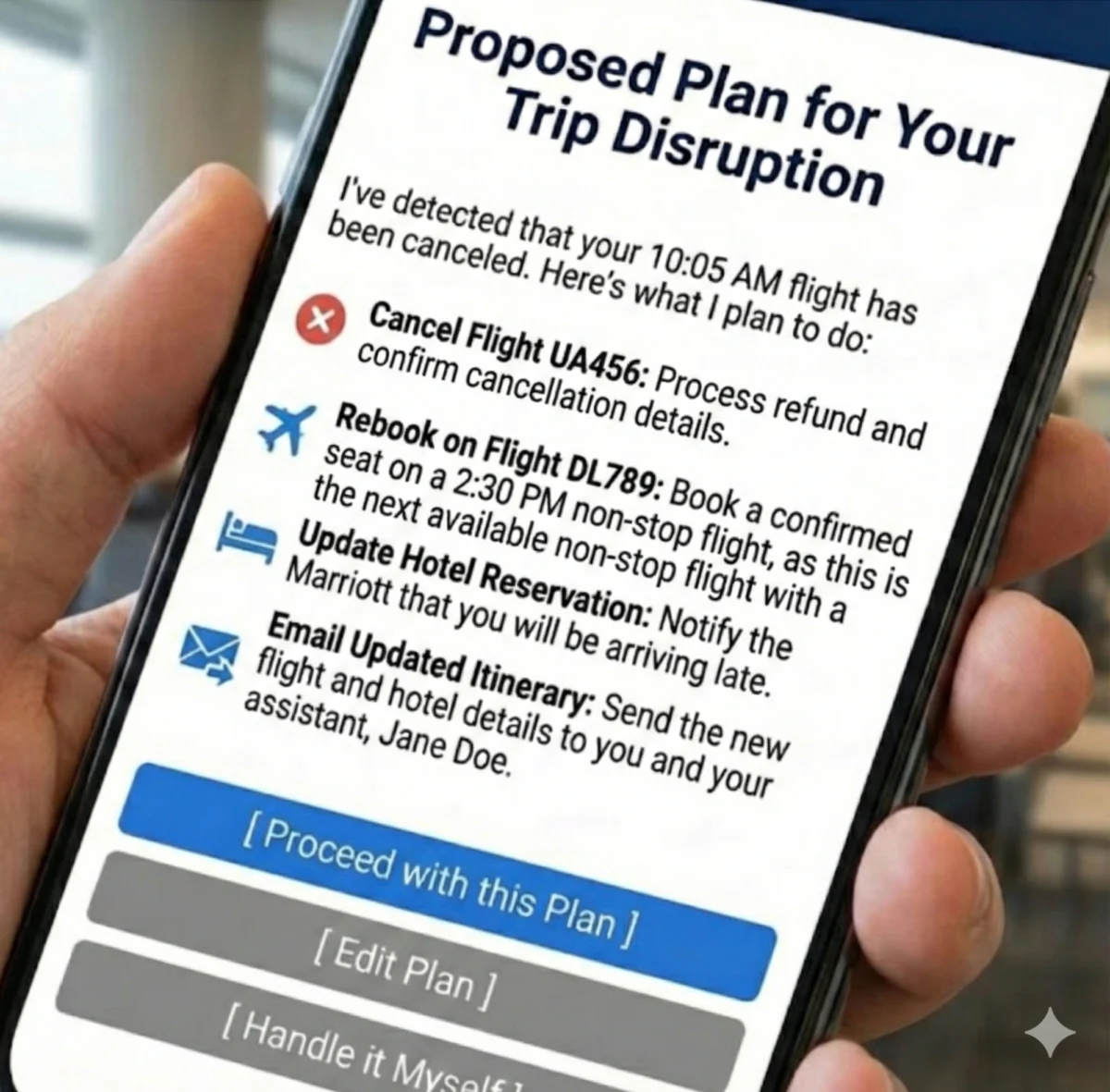

The Intent Preview serves as the foundational moment of consent. Before an agent executes any significant action, particularly one that is irreversible or involves a financial exchange, it must present a clear, reviewable plan. This pattern turns an autonomous process into a transparent roadmap. In high-stakes environments, such as DevOps or medical diagnostics, this preview acts as a safety barrier.

Industry benchmarks suggest that for high-risk actions, an "Acceptance Rate" of over 85% is required to validate that the agent’s plan aligns with user intent. If the acceptance rate drops below this threshold, it indicates a failure in the agent’s reasoning or the preview’s clarity. The anatomy of an effective preview includes a summary of the detected problem, the proposed sequence of actions, and a clear set of options: Proceed, Edit, or Override.
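The anatomy described above can be sketched as a small data structure. This is a minimal illustration rather than a reference implementation: the names `IntentPreview`, `Decision`, and `acceptance_rate` are hypothetical, and counting only unmodified "Proceed" responses as acceptances is an assumption, since the text does not define how the rate is computed.

```python
from dataclasses import dataclass
from enum import Enum

# The 85% figure comes from the text; the constant name is hypothetical.
ACCEPTANCE_THRESHOLD = 0.85


class Decision(Enum):
    PROCEED = "proceed"
    EDIT = "edit"
    OVERRIDE = "override"


@dataclass
class IntentPreview:
    problem_summary: str
    proposed_steps: list[str]

    def render(self) -> str:
        """Build the plain-text preview shown before the agent acts."""
        lines = [f"Detected problem: {self.problem_summary}", "Proposed plan:"]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.proposed_steps, 1)]
        lines.append("Options: " + " / ".join(d.value.title() for d in Decision))
        return "\n".join(lines)


def acceptance_rate(decisions: list[Decision]) -> float:
    """Share of previews accepted as-is (assumption: only PROCEED counts)."""
    if not decisions:
        return 0.0
    return sum(d is Decision.PROCEED for d in decisions) / len(decisions)


if __name__ == "__main__":
    preview = IntentPreview(
        "Your flight was cancelled",
        ["Find a non-stop alternative", "Rebook the ticket"],
    )
    print(preview.render())
    history = [Decision.PROCEED] * 9 + [Decision.EDIT]
    print(acceptance_rate(history) >= ACCEPTANCE_THRESHOLD)
```

Tracking the decision for every preview, rather than only the rejections, is what makes the acceptance rate computable later.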

2. The Autonomy Dial and Progressive Authorization

Trust is not a binary state; it exists on a spectrum. The "Autonomy Dial" allows users to calibrate the agent’s independence based on their personal risk tolerance and the specific task at hand. This is a form of progressive authorization. For example, a user might allow an AI agent to "Act Autonomously" when scheduling internal team meetings but require "Full Confirmation" before the agent sends an email to a high-value client.

This granularity is essential for long-term adoption. Data indicates that systems that let users adjust permissions dynamically (with the frequency of those adjustments tracked as "Setting Churn") see higher retention rates. Without this control, a single system error often leads to total feature abandonment rather than a simple recalibration of permissions.
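One way to picture the dial is as a per-task permission table. The sketch below is illustrative only: `AutonomyDial`, `AutonomyLevel`, and the task-type strings are hypothetical names, and defaulting unknown task types to the most restrictive level is a design assumption rather than something the pattern prescribes.

```python
from enum import Enum


class AutonomyLevel(Enum):
    FULL_CONFIRMATION = "full_confirmation"
    ACT_AUTONOMOUSLY = "act_autonomously"


class AutonomyDial:
    """Per-task autonomy settings that the user can change at any time."""

    def __init__(self) -> None:
        self._levels: dict[str, AutonomyLevel] = {}

    def set_level(self, task_type: str, level: AutonomyLevel) -> None:
        self._levels[task_type] = level

    def requires_confirmation(self, task_type: str) -> bool:
        # Assumption: tasks the user never configured fall back to the
        # most restrictive level.
        level = self._levels.get(task_type, AutonomyLevel.FULL_CONFIRMATION)
        return level is AutonomyLevel.FULL_CONFIRMATION


if __name__ == "__main__":
    dial = AutonomyDial()
    dial.set_level("schedule_internal_meeting", AutonomyLevel.ACT_AUTONOMOUSLY)
    print(dial.requires_confirmation("schedule_internal_meeting"))
    print(dial.requires_confirmation("email_high_value_client"))
```

Keeping the setting per task type, instead of one global switch, is what lets a user tighten permissions after an error without abandoning the feature.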

3. Explainable Rationale and The "Why" Factor

When an agent acts, especially in the background, the user’s immediate psychological response is often skepticism. The Explainable Rationale pattern proactively justifies the agent’s decisions using human-readable language grounded in user preferences. Instead of providing technical logs, the system might state, "I rebooked this flight because your profile indicates a preference for non-stop travel and the price was within your $500 limit."

By translating system primitives into user-centric logic, designers can prevent the perception of "random" behavior. This pattern is critical for maintaining a correct mental model of the AI’s capabilities, reducing "Why?" support tickets and increasing user confidence in the system’s logic.
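As a rough illustration of translating system state into user-centric language, the sketch below assembles the flight example from stored preferences. The `Preferences` and `Flight` types and the `explain_rebooking` function are hypothetical, and a production system would likely need far richer preference data than two fields.

```python
from dataclasses import dataclass


@dataclass
class Preferences:
    prefers_nonstop: bool
    price_limit: float


@dataclass
class Flight:
    nonstop: bool
    price: float


def explain_rebooking(flight: Flight, prefs: Preferences) -> str:
    """Justify a rebooking using only reasons grounded in the user's profile."""
    reasons = []
    if prefs.prefers_nonstop and flight.nonstop:
        reasons.append("your profile indicates a preference for non-stop travel")
    if flight.price <= prefs.price_limit:
        reasons.append(f"the price was within your ${prefs.price_limit:.0f} limit")
    if not reasons:
        # Assumption: with no matching preference, the agent asks for review
        # instead of inventing a justification.
        return ("I rebooked this flight, but it does not match your saved "
                "preferences. Please review it.")
    return "I rebooked this flight because " + " and ".join(reasons) + "."


if __name__ == "__main__":
    print(explain_rebooking(Flight(nonstop=True, price=450.0),
                            Preferences(prefers_nonstop=True, price_limit=500.0)))
```

Every clause in the message maps to a stored preference, which is what keeps the explanation from reading as an after-the-fact rationalization.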

4. Confidence Signaling and Uncertainty Management

Agentic systems must be self-aware. By surfacing its own confidence level regarding a specific plan, the agent helps the user decide when to apply more scrutiny. This is particularly vital in "expert systems" like legal research or medical aids. Implementation often involves visual cues—such as a "High Confidence" badge for routine tasks or a "Review Recommended" warning for ambiguous requests.

Surfacing uncertainty is a primary defense against "automation bias," where users blindly follow AI suggestions. By signaling low confidence, the agent encourages the human-in-the-loop to intervene, thereby preventing catastrophic hallucinations or errors.
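The badge logic can be as simple as a threshold on a confidence score. In the sketch below, the 0.8 cut-off and the function name `confidence_badge` are assumptions for illustration; the text does not specify how confidence is measured or where the line should be drawn.

```python
def confidence_badge(score: float, high_threshold: float = 0.8) -> str:
    """Map a confidence score in [0, 1] to the label shown to the user.

    The threshold is a hypothetical default and would need calibration
    against real outcomes for each task type.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= high_threshold:
        return "High Confidence"
    return "Review Recommended"


if __name__ == "__main__":
    print(confidence_badge(0.95))
    print(confidence_badge(0.55))
```

A badge like this only counters automation bias if the underlying score is calibrated, so that "High Confidence" plans really do succeed more often than flagged ones.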

5. The Action Audit and Universal Undo

The single most effective mechanism for building confidence is the "safety net." A persistent, readable Action Audit log, coupled with a prominent "Undo" button, lowers the perceived risk of granting autonomy. In the event of a misunderstanding, the user must feel empowered to reverse the consequences instantly.

Design best practices dictate that the undo function should be available for a reasonable window of time and that the audit log should be easily accessible from the main interface. Success in this area is measured by a "Reversion Rate"; a healthy system typically sees a reversion rate of less than 5%, indicating that while the safety net exists, it is rarely needed.
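A minimal sketch of an audit log with a time-limited undo and a reversion-rate metric might look like the following. The class and method names are hypothetical, the undo window is passed in because the text only calls for a "reasonable" one, and the reversion rate is assumed to be reverted actions divided by all logged actions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable


@dataclass
class AuditEntry:
    description: str
    undo: Callable[[], None]   # callback that reverses the action
    performed_at: datetime
    reverted: bool = False


class ActionAudit:
    def __init__(self, undo_window: timedelta) -> None:
        self.undo_window = undo_window
        self.entries: list[AuditEntry] = []

    def record(self, description: str, undo: Callable[[], None],
               now: datetime) -> AuditEntry:
        entry = AuditEntry(description, undo, now)
        self.entries.append(entry)
        return entry

    def undo(self, entry: AuditEntry, now: datetime) -> bool:
        """Reverse an action if it is still inside the undo window."""
        if entry.reverted or now - entry.performed_at > self.undo_window:
            return False
        entry.undo()
        entry.reverted = True
        return True

    def reversion_rate(self) -> float:
        if not self.entries:
            return 0.0
        return sum(e.reverted for e in self.entries) / len(self.entries)


if __name__ == "__main__":
    calendar = ["standup", "design review"]
    audit = ActionAudit(undo_window=timedelta(minutes=30))
    start = datetime(2025, 1, 1, 9, 0)
    entry = audit.record("Added design review",
                         lambda: calendar.remove("design review"), start)
    print(audit.undo(entry, start + timedelta(minutes=5)), calendar)
    print(audit.reversion_rate())
```

Storing the undo callback alongside the log entry keeps the human-readable record and the reversal mechanism in one place, so the interface can offer "Undo" directly from the audit view.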

6. Escalation Pathways for Ambiguity

A sophisticated agent knows its limits. The Escalation Pathway pattern ensures that when an agent encounters high ambiguity or incomplete information, it stops and asks for clarification rather than guessing. This "humility" in design prevents "confident catastrophes"—situations where an agent executes a wrong action with high technical certainty.
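The stop-and-ask behavior can be sketched as a gate that runs before any action. Everything here is illustrative: `AgentRequest`, `decide`, the ambiguity score, and the 0.4 threshold are hypothetical, and how ambiguity is actually estimated is left open by the text.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRequest:
    goal: str
    missing_fields: list[str] = field(default_factory=list)
    ambiguity: float = 0.0  # assumed upstream score: 0 = clear, 1 = unclear


def decide(request: AgentRequest, max_ambiguity: float = 0.4) -> tuple[str, str]:
    """Return ("escalate", question) or ("proceed", summary); never guess."""
    if request.missing_fields:
        needed = ", ".join(request.missing_fields)
        return "escalate", f"I need more information before acting: {needed}"
    if request.ambiguity > max_ambiguity:
        return "escalate", f'I am not sure what "{request.goal}" means here. Can you clarify?'
    return "proceed", f"Proceeding with: {request.goal}"


if __name__ == "__main__":
    print(decide(AgentRequest("Book a flight to Berlin", ["travel date"])))
    print(decide(AgentRequest("Sort out the invoice", ambiguity=0.9)))
    print(decide(AgentRequest("Book a flight to Berlin on 3 May")))
```

Checking for missing information before ambiguity means the agent asks the most concrete question first.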

Data-Driven Governance and Organizational Accountability

The implementation of these UX patterns cannot occur in a vacuum. Organizations must build a "Governance Engine" to oversee the deployment of agentic features. This often takes the form of an Agentic AI Ethics Council—a cross-functional group comprising UX researchers, product managers, engineers, and legal counsel.

The Role of the Ethics Council

The council is responsible for maintaining an "Agent Risk Register," which identifies potential failure modes before they reach the user. They also oversee the "Autonomy Policy Documentation," defining which tasks are eligible for full autonomy and which require human-in-the-loop (HITL) protocols.

Recent market analysis suggests that companies with formal AI governance frameworks ship features 30% faster because the "guardrails" are established early in the development cycle, reducing late-stage legal or safety roadblocks.

Phased Implementation: A Roadmap for Product Leaders

For organizations looking to integrate agentic AI, a phased approach is recommended to build both technical infrastructure and user trust simultaneously.

- Phase 1: Foundational Safety (Months 1-6): Focus on analysis and suggestion. The agent proposes plans, but the human executes every step. The goal is to validate the agent’s reasoning without taking operational risks.

- Phase 2: Calibrated Autonomy (Months 7-12): Introduce autonomy for low-risk, high-frequency tasks. Implement the "Autonomy Dial" and "Undo" functions. Collect data on user intervention rates to refine the agent’s logic.

- Phase 3: Proactive Delegation (Months 13+): Enable full autonomy for complex workflows based on the trust established in earlier phases. Focus on background execution and proactive error recovery.

The Service Recovery Paradox in AI

In the context of agentic AI, errors are inevitable. However, the "Service Recovery Paradox" suggests that a well-handled failure can actually result in higher user loyalty than if no failure had occurred. When an agent makes a mistake—such as transferring funds to the wrong account—an empathetic apology combined with immediate remediation (reversing the transfer) and a transparent explanation of how the error will be prevented in the future can solidify the user-agent relationship.

Broader Impact and Industry Implications

The transition to agentic systems is not merely a technical upgrade; it is a fundamental shift in the social contract between humans and machines. As these systems take on more responsibility, the role of the human shifts from "operator" to "supervisor." This has profound implications for workforce development, as employees must be trained to manage AI agents rather than perform the tasks themselves.

Furthermore, the legal landscape is shifting. In jurisdictions like the EU, the AI Act is beginning to set standards for "high-risk" autonomous systems, making the principles behind the design patterns above (transparency, explainability, and human oversight) legal requirements rather than just best practices.

In conclusion, autonomy is a technical achievement, but trustworthiness is a design achievement. The future utility of AI depends on the ability of designers and product leaders to architect relationships based on mutual understanding and clear boundaries. By implementing robust UX patterns and governance frameworks, the industry can ensure that agentic AI serves as a powerful tool for human empowerment rather than a source of systemic risk. The goal is an experience where AI autonomy feels like a privilege granted by the user, ensuring that the human remains the ultimate authority in an increasingly automated world.