The technological landscape is undergoing a fundamental transition from generative artificial intelligence, which focuses on content creation and suggestion, to agentic artificial intelligence, which can execute complex tasks autonomously. As software evolves from a tool that assists to an agent that acts, the industry faces a critical challenge: ensuring these systems remain transparent, controllable, and worthy of human trust. Industry experts assert that while autonomy is a technical achievement, trustworthiness is a deliberate output of the design process. To navigate this shift, product leaders and user experience (UX) professionals are adopting a new framework of design patterns and organizational governance to mitigate the risks of "agentic sludge": the accumulation of unrequested or poorly executed autonomous actions.

The Evolution of AI Interaction: From Suggestion to Agency

The trajectory of artificial intelligence over the last decade has moved through distinct phases. The initial "Predictive Era" focused on recommendation engines and data sorting. This was followed by the "Generative Era," catalyzed in late 2022, in which systems such as large language models (LLMs) became adept at synthesizing information and generating text or imagery. We have now entered the "Agentic Era," in which AI systems are integrated with APIs and external tools to perform real-world actions, such as booking travel, managing cloud infrastructure, or executing financial transactions.

Unlike generative systems, which require a human to review and "copy-paste" output, agentic systems operate with a degree of delegated authority. This leap demands a psychological and methodological shift. If a chatbot provides a wrong answer, the cost is often minor; if an autonomous agent incorrectly cancels a flight or liquidates a stock position, the consequences can be severe and difficult to reverse. Consequently, the industry is moving toward a "User-Centric Agentic Design" model, which prioritizes human agency over total machine independence.

Core UX Patterns for Maintaining Human Oversight

To manage the transition from machine suggestion to machine action, designers are implementing six core patterns that follow the functional lifecycle of an agentic interaction. These patterns are designed to transform the "black box" of AI logic into a transparent partnership.

1. The Intent Preview and Informed Consent

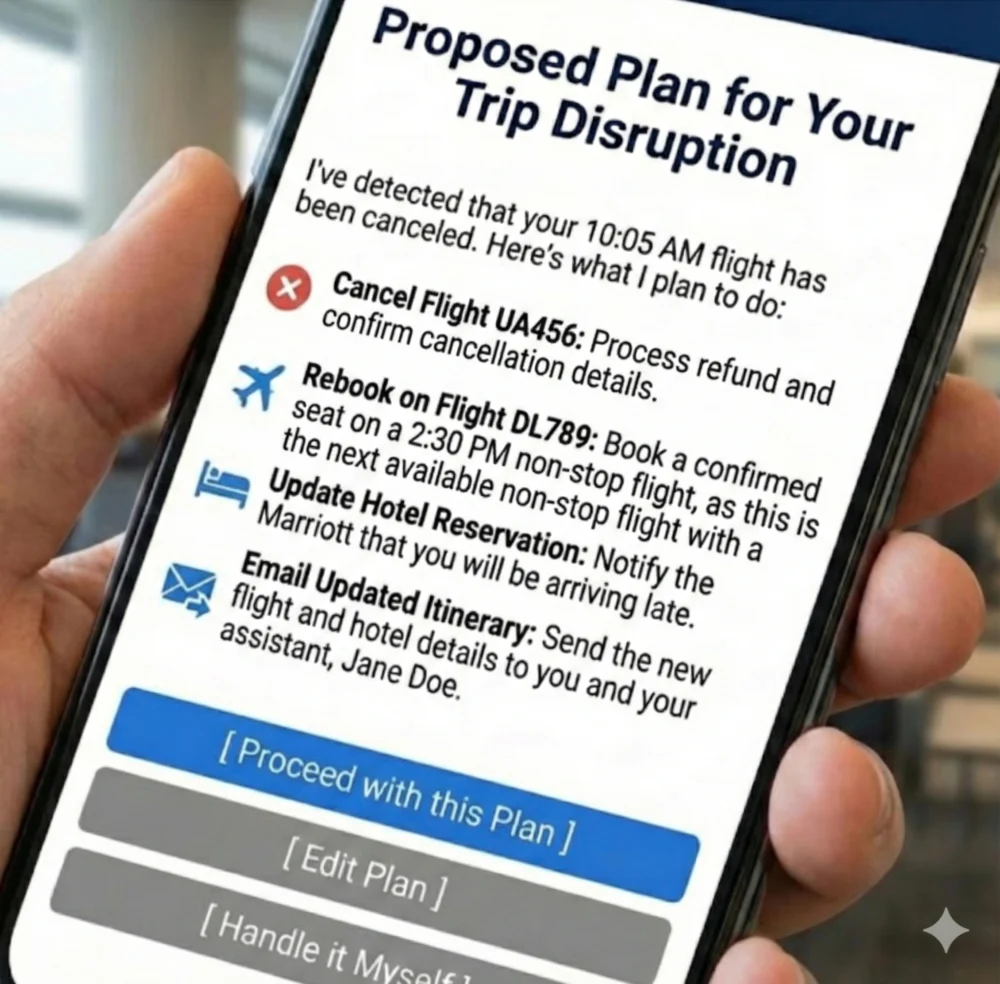

The Intent Preview, also known as a Plan Summary, serves as the foundational moment of seeking consent. Before an agent executes a significant action, it must present a clear, unambiguous roadmap of its intended steps. This pattern addresses the psychological need for "informed consent," ensuring the user is never surprised by the system’s behavior.

In high-stakes environments, such as cloud infrastructure management, an Intent Preview might detail specific technical steps like "Drain Traffic" or "Rollback Instance." For a consumer-facing travel agent, it would outline the specific flight, hotel, and communication steps planned. Industry benchmarks suggest that for irreversible or financial actions, an "Acceptance Rate" above 85% is needed to show that the agent truly understands user intent. A rate below this threshold indicates a disconnect between the agent’s logic and the user’s expectations.
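As a rough illustration, an Intent Preview can be modeled as a small data structure that renders a numbered plan, with the Acceptance Rate tracked alongside it. This is a minimal sketch, not a reference implementation: the `IntentPreview` class, its field names, and the definition of the rate as "approved previews divided by all previews shown" are assumptions made for the example; only the 85% threshold comes from the text.

```python
from dataclasses import dataclass


@dataclass
class IntentPreview:
    """A plan the agent shows the user before acting (hypothetical structure)."""
    goal: str
    steps: list[str]
    irreversible: bool = False

    def render(self) -> str:
        """Return a numbered, human-readable plan summary."""
        lines = [f"Plan: {self.goal}"]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.steps, start=1)]
        if self.irreversible:
            lines.append("  Note: this plan includes steps that cannot be undone.")
        return "\n".join(lines)


# Threshold quoted in the text for irreversible or financial actions.
ACCEPTANCE_TARGET = 0.85


def acceptance_rate(decisions: list[bool]) -> float:
    """Share of previews the user approved (assumed definition); 0.0 with no data."""
    if not decisions:
        return 0.0
    return sum(decisions) / len(decisions)


def meets_target(decisions: list[bool], target: float = ACCEPTANCE_TARGET) -> bool:
    """True when the acceptance rate is strictly above the target."""
    return acceptance_rate(decisions) > target


preview = IntentPreview(
    goal="Roll back the api-server deployment",
    steps=["Drain Traffic", "Rollback Instance"],
    irreversible=True,
)
print(preview.render())
```

A team would typically compute the rate separately for each action category, since a healthy average can hide a weak rate on the high-stakes actions the benchmark is about.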

2. Calibrating Trust through the Autonomy Dial

Trust is not a binary state but a spectrum that varies based on the task’s risk. The Autonomy Dial allows users to set the level of independence they grant an agent. This form of progressive authorization moves through three primary settings:

- Suggest Only: The agent provides options but takes no action.

- Propose & Act: The agent formulates a plan and waits for a "Proceed" confirmation.

- Autonomous Execution: The agent acts within pre-defined boundaries and reports back.

By allowing users to tune these settings on a per-task basis (for example, letting an AI schedule internal meetings autonomously while requiring confirmation for external emails), organizations can match system behavior to individual risk tolerance.
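The three settings and the per-task tuning can be sketched as an enum plus a lookup table. The names below (`AutonomyLevel`, `AutonomyDial`, the task-type strings, and the returned step names) are hypothetical and chosen only for illustration; the sketch also assumes that unknown task types fall back to the most conservative setting.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The three dial settings described above, ordered by independence."""
    SUGGEST_ONLY = 1
    PROPOSE_AND_ACT = 2
    AUTONOMOUS = 3


class AutonomyDial:
    """Per-task autonomy settings with a conservative default (assumption)."""

    def __init__(self, default: AutonomyLevel = AutonomyLevel.SUGGEST_ONLY) -> None:
        self._default = default
        self._per_task: dict[str, AutonomyLevel] = {}

    def set_level(self, task_type: str, level: AutonomyLevel) -> None:
        self._per_task[task_type] = level

    def level_for(self, task_type: str) -> AutonomyLevel:
        return self._per_task.get(task_type, self._default)

    def next_step(self, task_type: str) -> str:
        """Translate the dial setting into what the agent does next."""
        level = self.level_for(task_type)
        if level == AutonomyLevel.SUGGEST_ONLY:
            return "show_options"
        if level == AutonomyLevel.PROPOSE_AND_ACT:
            return "await_confirmation"
        return "execute_and_report"


dial = AutonomyDial()
dial.set_level("internal_meeting", AutonomyLevel.AUTONOMOUS)
dial.set_level("external_email", AutonomyLevel.PROPOSE_AND_ACT)

print(dial.next_step("internal_meeting"))  # execute_and_report
print(dial.next_step("external_email"))    # await_confirmation
print(dial.next_step("wire_transfer"))     # show_options (falls back to default)
```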

3. The Explainable Rationale: Grounding Actions in Logic

When an agent acts, particularly in the background, the user’s immediate question is "Why?" The Explainable Rationale pattern proactively provides a human-readable justification for the agent’s decisions. This is not a technical log file but a translation of system primitives into user-facing language. For example, instead of citing a "high probability score," the agent might state: "I rebooked this flight because your original departure was canceled and this is the only remaining option that arrives before your scheduled meeting." This transparency prevents the perception of "random" behavior or software bugs.
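One way to keep rationales in user-facing language is to have the agent fill in a small structured record rather than expose raw scores. The sketch below is an assumption about how that might look; the `Rationale` class and its three fields are invented for this example and reproduce the flight sentence quoted above.

```python
from dataclasses import dataclass


@dataclass
class Rationale:
    """Structured reasons behind an action (hypothetical fields)."""
    action: str      # what the agent did, phrased for the user
    trigger: str     # the event that prompted the action
    constraint: str  # the user goal the chosen option satisfies

    def to_sentence(self) -> str:
        """Render the record as a single first-person explanation."""
        return f"I {self.action} because {self.trigger} and {self.constraint}."


rationale = Rationale(
    action="rebooked this flight",
    trigger="your original departure was canceled",
    constraint=(
        "this is the only remaining option that arrives "
        "before your scheduled meeting"
    ),
)
print(rationale.to_sentence())
```

Keeping the trigger and constraint as separate fields also makes it possible to log them in the audit trail without parsing free text.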

4. Confidence Signaling and Automation Bias Mitigation

To help users apply the appropriate level of scrutiny, agentic systems must surface their own internal confidence levels. This is critical for preventing "automation bias," the human tendency to over-rely on automated systems even when they are incorrect. Implementation often involves visual cues, such as progress bars or color-coded status indicators (e.g., a yellow warning for a plan formulated with ambiguous data). In expert systems like medical aids or legal assistants, these signals encourage the human operator to double-check high-risk outputs.
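A color-coded indicator of this kind can be driven by a simple mapping from a confidence score to a status. The thresholds (0.9 and 0.6), the function name, and the label wording below are illustrative assumptions, not values from the text; a real product would calibrate them against observed error rates.

```python
def confidence_signal(confidence: float, high: float = 0.9, low: float = 0.6) -> dict:
    """Map a confidence score in [0, 1] to a color-coded status for the UI.

    The default thresholds are placeholders for illustration only.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= high:
        return {"color": "green", "label": "High confidence", "needs_review": False}
    if confidence >= low:
        return {
            "color": "yellow",
            "label": "Based on ambiguous data, please review",
            "needs_review": True,
        }
    return {"color": "red", "label": "Low confidence, review required", "needs_review": True}


for score in (0.95, 0.7, 0.2):
    signal = confidence_signal(score)
    print(score, signal["color"], signal["label"])
```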

5. The Action Audit and the "Undo" Safety Net

One of the most effective mechanisms for building confidence in autonomous systems is the ability to reverse an action. A persistent Action Audit log provides a historical record of every step taken by the agent. Crucially, each entry should be accompanied by a prominent "Undo" button. Knowing that a mistake is not permanent creates the psychological safety necessary for users to delegate complex tasks. Market data indicates that a "Reversion Rate" of approximately 5% is a healthy sign that users are actively monitoring and correcting the system, whereas a 0% rate may suggest that users are disengaged or that errors are going unnoticed.
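A minimal sketch of an audit log with per-entry undo follows. It assumes every action can supply a compensating callback, which will not hold for truly irreversible operations; the class names and the definition of the Reversion Rate as "reverted entries divided by all entries" are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AuditEntry:
    """One recorded agent action and how to reverse it (hypothetical shape)."""
    description: str
    undo_fn: Callable[[], None]
    reverted: bool = False


class ActionAudit:
    """Append-only log of agent actions, each with an undo callback."""

    def __init__(self) -> None:
        self.entries: list[AuditEntry] = []

    def record(self, description: str, undo_fn: Callable[[], None]) -> int:
        """Store the action and return its position in the log."""
        self.entries.append(AuditEntry(description, undo_fn))
        return len(self.entries) - 1

    def undo(self, entry_id: int) -> None:
        """Run the compensating action once; repeated calls do nothing."""
        entry = self.entries[entry_id]
        if entry.reverted:
            return
        entry.undo_fn()
        entry.reverted = True

    def reversion_rate(self) -> float:
        """Share of logged actions that users reverted (assumed definition)."""
        if not self.entries:
            return 0.0
        return sum(e.reverted for e in self.entries) / len(self.entries)


calendar = {"standup"}
audit = ActionAudit()
first = audit.record("Scheduled standup", lambda: calendar.discard("standup"))
for n in range(19):
    audit.record(f"Routine action {n}", lambda: None)

audit.undo(first)                        # the user presses "Undo" on the first entry
print(calendar)                          # set()
print(f"{audit.reversion_rate():.0%}")   # 5%
```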

6. The Escalation Pathway for Ambiguity

A sophisticated agent recognizes its own limitations. When encountering ambiguous intent or incomplete data, the system should not guess; it should escalate. This pattern involves the agent pausing its workflow to ask for clarification. By acknowledging its limits, the agent demonstrates a "humility" that paradoxically increases long-term trust. Successful recovery from an escalation point is a key metric, with industry standards targeting a "Recovery Success Rate" of over 90%.
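The pause-and-ask behavior can be sketched as a check that runs before planning: if required details are missing, the agent returns a question instead of a guess. Everything named below (`Escalation`, `next_move`, the field names) is hypothetical, and the Recovery Success Rate is assumed to mean "escalations that ended with the task completed, out of all closed escalations."

```python
from dataclasses import dataclass
from typing import Optional, Union


@dataclass
class Escalation:
    """A clarification request raised instead of guessing (hypothetical shape)."""
    question: str
    recovered: Optional[bool] = None  # None while still waiting on the user


def next_move(request: dict, required_fields: list[str]) -> Union[Escalation, str]:
    """Escalate when any required field is missing or empty; otherwise proceed."""
    missing = [name for name in required_fields if not request.get(name)]
    if missing:
        return Escalation(
            question="I need a bit more detail before continuing: " + ", ".join(missing) + "."
        )
    return "proceed"


def recovery_success_rate(escalations: list[Escalation]) -> float:
    """Share of closed escalations that ended in a completed task."""
    closed = [e for e in escalations if e.recovered is not None]
    if not closed:
        return 0.0
    return sum(e.recovered for e in closed) / len(closed)


result = next_move({"destination": "Berlin", "date": ""}, ["destination", "date"])
print(result.question)  # I need a bit more detail before continuing: date.
```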

Organizational Governance: The Agentic AI Ethics Council

The implementation of these design patterns requires a robust internal support structure. Leading organizations are establishing "Agentic AI Ethics Councils," cross-functional alliances comprising UX researchers, product managers, engineers, and legal counsel. The primary goal of this body is to move safety from a final checklist to a foundational strategy.

Key responsibilities of this governance engine include:

- Maintaining an Agent Risk Register: Identifying potential failure modes before deployment.

- Developing Autonomy Policies: Defining which tasks are "off-limits" for full autonomy (e.g., HR terminations or high-value wire transfers).

- Continuous Monitoring: Reviewing Action Audit logs to identify systemic patterns of error or "sludge."
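An autonomy policy of the kind listed above could be enforced in code as a cap that overrides whatever level a user has selected. The sketch below is one possible shape under stated assumptions: the level names, the `AUTONOMY_CAPS` table, and the task-type keys are invented for illustration, and "off-limits for full autonomy" is interpreted as "never above confirmation-required."

```python
# Autonomy levels ordered from least to most independent (illustrative names).
LEVELS = ["suggest_only", "propose_and_act", "autonomous"]

# Hypothetical policy table a governance council might maintain:
# the highest level each sensitive task type may ever run at.
AUTONOMY_CAPS = {
    "hr_termination": "propose_and_act",
    "high_value_wire_transfer": "propose_and_act",
}


def effective_level(task_type: str, requested: str) -> str:
    """Return the lower of the user's requested level and the policy cap.

    Raises ValueError if either level name is not in LEVELS.
    """
    cap = AUTONOMY_CAPS.get(task_type, "autonomous")
    return min(requested, cap, key=LEVELS.index)


print(effective_level("hr_termination", "autonomous"))      # propose_and_act
print(effective_level("meeting_scheduling", "autonomous"))  # autonomous
```

Keeping the cap in a single table means the council can tighten a policy without touching the agent's planning logic.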

This governance model ensures that innovation does not outpace the organization’s ability to manage brand and operational risk. By formalizing these processes, companies can ship ambitious features with greater speed, knowing the necessary guardrails are in place.

The Service Recovery Paradox in AI

Despite the best design and governance, errors in agentic systems are inevitable. However, research into the "Service Recovery Paradox" suggests that a well-handled error can actually result in higher user loyalty than if no error had occurred. This phenomenon occurs when a system acknowledges a mistake, provides an empathetic apology, takes immediate corrective action, and explains how it will prevent the issue in the future.

In the context of agentic AI, this means designing "Repair and Redress" interfaces that prioritize accountability. An effective apology from an AI agent should be followed by a tangible remediation step, such as a reversed transaction or a corrected itinerary, coupled with a link to human support for further assistance.

A Phased Roadmap for Product Integration

For product leaders, the transition to agentic AI is best approached as a three-phase journey to build both technical capability and user trust:

- Phase 1: Foundational Safety (Suggest & Propose): The agent is limited to analysis and recommendation. This phase focuses on building the "Intent Preview" and "Explainable Rationale" patterns.

- Phase 2: Calibrated Autonomy (Act with Confirmation): The agent begins taking actions but only after explicit user approval. This phase introduces the "Autonomy Dial" and "Confidence Signals."

- Phase 3: Proactive Delegation (Act Autonomously): The agent operates independently within set boundaries, utilizing the "Action Audit" and "Escalation Pathways" to maintain oversight.

Broader Implications: Architecting the Future of Human-AI Relationships

The shift to agentic AI represents a new frontier in human-computer interaction. As these systems move from tools we use to partners we manage, the role of the designer evolves into that of a "relationship architect." The ultimate utility of AI will not be measured solely by its processing power, but by its ability to respect human authority while reducing cognitive load.

By implementing clear patterns for control, designing for graceful failure, and establishing rigorous governance, the industry can ensure that the rise of agentic AI enhances rather than diminishes human agency. The future of autonomous systems rests on the realization that autonomy is a privilege granted by the user, not a right seized by the machine. Through wise design and foresight, agentic AI can become the most powerful lever for human productivity yet devised, provided it remains firmly under the stewardship of those it is intended to serve.